Key Learning Points

| Key learning point | Link to detailed explanation | External reference link |
| --- | --- | --- |
| AI works best when it supports bounded, repetitive verification tasks rather than replacing engineering judgement | Where AI helps most | [1], [2], [3] |
| The strongest early use cases are testbench assistance, assertion support, regression triage, coverage analysis, and debug clustering | What good use cases look like | [2], [3], [4] |
| AI becomes risky when teams use it without traceability, data discipline, or human review | Where AI hurts | [2], [5], [6] |
| A safe pilot needs a clear scope, measurable success criteria, and explicit human sign-off boundaries | How to pilot AI in design verification safely | [2], [5] |
| The objective is not full automation. It is better prioritisation, faster learning, and stronger decision confidence | What success should look like | [2], [5] |

Download the training slide deck

This article is also available as a downloadable PDF slide deck for engineers and verification teams reviewing practical “AI in DV” adoption.

[Download PDF: AI in Design Verification – Where It Helps, Where It Hurts, and How to Pilot Safely]

Introduction

AI in design verification is no longer a speculative topic. It now sits inside a practical engineering question: which parts of the verification flow benefit from machine assistance, and which parts still require direct human control? That question matters because design verification already operates under pressure from increasing design complexity, multi-domain integration, regression costs, and the ongoing difficulty of coverage closure. At the same time, structured verification environments such as UVM remain the operational backbone for much of mainstream DV, so any useful AI deployment has to work inside existing flows rather than outside them [1].

The useful way to evaluate AI in design verification is not to ask whether it can “do verification”. That framing is too loose to guide investment or process change. A better question is whether AI can reduce manual effort, improve prioritisation, surface missed patterns, or shorten debug cycles without weakening traceability or sign-off confidence. Recent research shows credible progress in automated UVM testbench generation, assertion generation, and coverage-guided refinement, but adoption remains limited by data quality, benchmarking, explainability, and workflow integration [2].

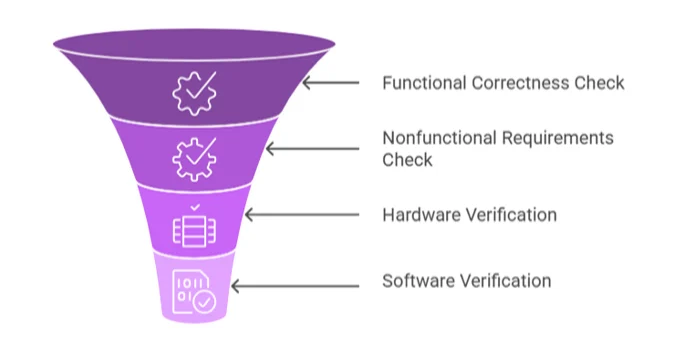

Figure 1: AI touchpoints across the verification loop. Source: Mohan et al., 2025

Figure 1 shows where AI can assist with planning, stimulus generation, regression prioritisation, coverage analysis, debug triage, and formal support, while leaving intent, waivers, exclusions, and sign-off under the engineer’s control. It is a verification-loop diagram rather than a decorative AI graphic: AI is inserted at defined workflow points around existing artefacts such as vPlans, testbenches, logs, coverage databases, and counterexamples. That framing is important because AI only becomes useful in DV when it strengthens an established verification discipline rather than bypassing it [1], [2].

Where AI helps most

The strongest AI use cases in design verification are the ones with narrow inputs, measurable outputs, and a clear feedback signal. That usually means tasks such as generating or refining testbench scaffolding, proposing assertions, ranking regressions, clustering failures, and identifying likely coverage gaps. These tasks already produce structured artefacts and historical datasets, making them more suitable for machine learning than for open-ended reasoning about the full design intent. The literature is increasingly consistent on this point: AI adds the most value where it supports dynamic verification workflows with data-backed prioritisation, not where it tries to replace the verification plan itself [2].

A useful example is testbench and stimulus assistance. UVM remains central because it standardises reusable verification components and scalable environments across projects. Recent work on an automated LLM-aided UVM machine shows why AI attracts attention here: generating UVM scaffolding and iteratively refining stimuli using coverage feedback are among the most labour-intensive parts of functional verification. That does not mean the model understands the design at a sign-off level. It means it can reduce setup friction and accelerate early exploration when paired with constraints, syntax controls, and engineer review [1], [3].

Assertion support is another realistic early win. SystemVerilog assertions remain powerful because they encode intent close to protocol, control, and interface behaviour, but they are expensive to write and review at scale. Recent LLM work on SVA datasets shows that domain-adapted models can materially improve assertion-generation quality, especially when teams need privacy-preserving local fine-tuning rather than relying on a general external model. In practice, this makes AI more useful as an assertion assistant than as an autonomous verifier. The model can suggest properties, but engineers still need to validate semantics, assumptions, and completeness [4].

Regression and debug are also good candidates because they are already data-rich. Verification teams accumulate large volumes of logs, failure signatures, coverage deltas, and bug histories. ML can help sort, cluster, and prioritise that material more quickly than a manual pass through raw outputs. This is particularly valuable when several failures share a common root cause, or when a regression suite has grown large enough that indiscriminate execution wastes compute without improving confidence in proportion to the effort. The practical value here is not just speed. It is better allocation of engineering attention [2].
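The clustering idea can be sketched in a few lines. This is a minimal illustration rather than a production triage tool: it assumes failures arrive as (test name, log message) pairs, the log lines are invented for the example, and `normalise` and `cluster_failures` are hypothetical names introduced here. The sketch masks run-specific values such as timestamps and data words so that failures sharing a signature fall into the same group.

```python
import re
from collections import defaultdict


def normalise(message: str) -> str:
    """Turn a raw log message into a signature by masking run-specific values."""
    sig = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", message)  # addresses and data words
    sig = re.sub(r"\d+", "<N>", sig)                    # timestamps, counts, seeds
    return re.sub(r"\s+", " ", sig).strip()


def cluster_failures(failures):
    """Group (test_name, message) pairs by signature, largest cluster first."""
    clusters = defaultdict(list)
    for test_name, message in failures:
        clusters[normalise(message)].append(test_name)
    return sorted(clusters.items(), key=lambda item: len(item[1]), reverse=True)


# Illustrative log lines only (assumed format); real simulator output will differ.
failures = [
    ("test_a", "UVM_ERROR @ 1200ns: scoreboard mismatch addr=0x1F00 exp=0xAB got=0xAC"),
    ("test_b", "UVM_ERROR @ 3400ns: scoreboard mismatch addr=0x2A10 exp=0x01 got=0x00"),
    ("test_c", "UVM_FATAL @ 50ns: timeout waiting for ready"),
]

for signature, tests in cluster_failures(failures):
    print(f"{len(tests)} failure(s): {signature} -> {tests}")
```

Signature matching of this kind is a deliberately simple baseline. A learned clustering model could replace `normalise`, but the output should stay inspectable so that an engineer can confirm the grouped failures really share a root cause.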

What good use cases look like

A good AI use case in DV usually has four characteristics. It uses structured inputs, produces inspectable outputs, can be checked against an existing engineering baseline, and does not sit directly on the sign-off boundary. That is why pilot projects often work best in coverage review, failure triage, test ranking, or assertion suggestion. The moment a use case becomes ambiguous, weakly measurable, or too close to a release decision, risk rises sharply.

Coverage closure illustrates this boundary well. AI can help identify likely holes, correlate unhit scenarios, or draft candidate properties and stimuli. Recent work on agentic AI for formal coverage closure suggests that these workflows can improve productivity and more systematically close specific gaps. But even in that work, the real value lies in accelerating analysis and proposal generation, not in removing the need for engineering review. Coverage still requires reachability judgement, specification context, and disciplined waiver handling [5].

Where AI hurts

AI can hurt a verification programme by creating the appearance of progress without the underlying evidence. That usually happens in four ways. First, models can produce syntactically plausible but semantically wrong artefacts. An assertion can compile and still check the wrong thing. A generated UVM component can look structurally clean while encoding poor assumptions about the protocol. Second, historical data can bias the model toward the last design rather than the current one. Third, weak provenance can break traceability. Fourth, teams can confuse a productivity gain with a confidence gain [2].

This is why explainability and workflow fit matter more than raw model novelty. The 2025 review of ML in microelectronic design verification highlights barriers to adoption, including dataset limitations, benchmarking challenges, and implementation complexity. Recent formal and agentic verification work also highlights the need for guarded workflows rather than blind trust. In other words, the technical risk is not simply hallucination. The larger programme risk is weak engineering governance around generated artefacts [2], [5].

Cross-domain verification increases this risk. Modern projects often span digital, firmware, and mixed-signal elements. Accellera’s UVM-MS standard reflects the need for verification environments to bridge digital-centric UVM with AMS and DMS contexts. A model that performs acceptably in a narrow digital block flow may degrade badly when assumptions become timing-sensitive, analogue-aware, or dependent on system interactions outside the training distribution. That makes bounded pilots even more important in mixed-domain programmes [6].

How to pilot AI in design verification safely

A safe pilot starts with one bounded verification problem, not an ambition to automate the whole flow. The best first pilots are usually one of these: regression triage for a stable block, assertion suggestion for a known protocol family, coverage-gap ranking for a mature environment, or failure clustering for a noisy regression farm. Each of these has a clearer success signal than open-ended “AI for verification”.

The next requirement is measurement. A pilot should define success in engineering terms before the first model run. Useful measures include time saved in triage, reduction in redundant regressions, percentage of AI-suggested assertions accepted after review, or time-to-root-cause on repeated failure classes. Coverage uplift can be part of the scorecard, but only if paired with quality checks. Raw coverage movement without review of reachability and semantic value can mislead the team. This is consistent with both the ML review literature and recent formal coverage work, which frame AI as a productivity aid that still depends on sound verification judgement [2], [5].
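Two of these measures can be made concrete with a small scorecard. The sketch below assumes the team records triage times and assertion review outcomes for a baseline period and a pilot period. The class, its field names, and the numbers are hypothetical placeholders introduced for illustration, not measured results.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class PilotScorecard:
    suggested_assertions: int            # AI-suggested assertions sent to review
    accepted_assertions: int             # suggestions accepted after engineer review
    baseline_triage_minutes: List[float]  # per-failure triage time before the pilot
    pilot_triage_minutes: List[float]     # per-failure triage time during the pilot

    def acceptance_rate(self) -> float:
        """Fraction of AI-suggested assertions accepted after review."""
        if self.suggested_assertions == 0:
            return 0.0
        return self.accepted_assertions / self.suggested_assertions

    def triage_time_saved(self) -> float:
        """Fractional reduction in median triage time relative to the baseline."""
        base = median(self.baseline_triage_minutes)
        return (base - median(self.pilot_triage_minutes)) / base


# Placeholder numbers for illustration only.
card = PilotScorecard(
    suggested_assertions=40,
    accepted_assertions=26,
    baseline_triage_minutes=[60, 90, 120],
    pilot_triage_minutes=[45, 60, 75],
)
print(f"acceptance rate: {card.acceptance_rate():.0%}")
print(f"median triage time saved: {card.triage_time_saved():.0%}")
```

Medians are used here because a few unusually long debug sessions can dominate a mean. Whatever statistic the team chooses, it should be fixed before the pilot starts so the baseline and pilot figures are comparable.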

Data discipline should follow immediately after the scope definition. Teams need traceable logs, normalised metadata, stable naming, and version-aware datasets before they can expect a model to behave predictably. That requirement often determines whether a pilot succeeds more than model choice does. If the regression environment cannot reliably map failures to DUT revision, scenario, configuration, and known bug state, the model will learn noise. In many organisations, the real pilot work therefore starts with data preparation rather than model training. This is one reason why research repeatedly calls for better open datasets and common benchmarks in verification [2].
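A simple pre-training check illustrates the point. The sketch below assumes each regression result is a dictionary and rejects records that cannot be mapped to DUT revision, scenario, configuration, and known bug state. The field names, the `split_by_traceability` helper, and the sample records are hypothetical and would need to match the team's own result schema.

```python
# Metadata the text says a failure must map to (field names are assumptions).
REQUIRED_FIELDS = ("dut_revision", "scenario", "configuration", "known_bug_state")


def split_by_traceability(records):
    """Separate records with complete metadata from those missing any field."""
    usable, rejected = [], []
    for record in records:
        missing = [field for field in REQUIRED_FIELDS if not record.get(field)]
        if missing:
            rejected.append((record.get("test", "<unnamed>"), missing))
        else:
            usable.append(record)
    return usable, rejected


# Made-up records for illustration.
records = [
    {"test": "test_x", "dut_revision": "r101", "scenario": "burst_write",
     "configuration": "cfg_a", "known_bug_state": "untriaged"},
    {"test": "test_y", "scenario": "burst_read", "configuration": "cfg_b"},
]

usable, rejected = split_by_traceability(records)
print(f"{len(usable)} usable, {len(rejected)} rejected")
for test_name, missing in rejected:
    print(f"{test_name} is missing: {', '.join(missing)}")
```

The rejected list is often the more useful output early on, because it shows how much of the regression history is fit for any model to learn from.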

Human review boundaries must also be explicit. AI output may assist with ranking, drafting, or summarising. It should not waive coverage, approve properties, or support sign-off decisions without engineer validation. That is especially important in formal flows, where small specification mistakes propagate quickly. The safest operating model is simple: generated artefacts enter the same review path as human-authored artefacts until the team has hard evidence that a narrower automation boundary is justified [5].
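This boundary can be expressed as an explicit policy check in whatever tooling tracks verification artefacts. The following is a minimal sketch under assumptions: the action names, the `Artefact` record, and `is_allowed` are hypothetical and do not correspond to any particular tool. It applies the same rule to every artefact regardless of origin, which mirrors the idea that generated and human-authored artefacts share one review path.

```python
from dataclasses import dataclass
from typing import Optional

# Actions placed on the sign-off boundary (names are illustrative).
GATED_ACTIONS = {"waive_coverage", "approve_property", "sign_off"}


@dataclass
class Artefact:
    name: str
    origin: str                        # "ai" or "human"
    reviewed_by: Optional[str] = None  # named engineer who validated the artefact


def is_allowed(action: str, artefact: Artefact) -> bool:
    """Gated actions need a named reviewer, whatever the artefact's origin."""
    if action in GATED_ACTIONS:
        return artefact.reviewed_by is not None
    return True  # ranking, drafting, and summarising are not gated


draft = Artefact(name="handshake_property", origin="ai")
print(is_allowed("rank_failures", draft))     # assistive action, not gated
print(is_allowed("approve_property", draft))  # blocked until an engineer reviews it
```

Recording the reviewer's name alongside the artefact also preserves provenance, so a later audit can see who validated a generated property and when the gate was passed.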

What success should look like

A good pilot does not need to prove that AI can replace engineers. It needs to prove that a verification team can improve throughput or learning without weakening rigour. In most organisations, that means fewer wasted regressions, faster clustering of failures, better-quality suggestions for assertions or scaffolding, and clearer prioritisation of what to inspect next. If a pilot cannot show one of those outcomes, it has not yet earned expansion.

It also helps to separate productivity outcomes from assurance outcomes. Faster debug triage improves productivity. Better sign-off confidence is an improvement in assurance. The second follows only when the team can demonstrate that the AI-assisted process preserves traceability, review quality, and technical accountability. That distinction matters because verification is not a content-generation task. It is a confidence-building task tied directly to programme risk [2].

Conclusion

AI has a real place in design verification, but it is a bounded place. It works best where the task is repetitive, data-rich, and reviewable. It becomes risky when the task is ambiguous, under-specified, or too close to sign-off authority. The practical path forward is therefore neither blanket enthusiasm nor blanket rejection. It is disciplined piloting.

For verification leaders, the most useful question is not whether AI belongs in the flow. It is where the control boundary should sit. Teams that answer that question well will probably gain better prioritisation, faster debug learning, and more efficient use of experienced engineers. Teams that answer it poorly may get attractive artefacts, cleaner dashboards, and weaker confidence underneath them.

Design verification teams are increasingly being asked to evaluate AI not as a concept, but as a practical addition to existing workflows. The question is not whether to adopt AI, but where to introduce it without weakening traceability, coverage discipline, or sign-off confidence.

If you are assessing AI-assisted verification approaches within your organisation, a structured starting point is essential. This includes identifying bounded use cases, defining measurable outcomes, and ensuring alignment with your existing verification strategy.

- For a structured view of how AI can be introduced into engineering workflows, including integration with verification environments and decision-making processes, Alpinum’s AI Automation approach outlines how these methods are applied in practice: https://alpinumconsulting.com/services/ai-in-dv/
- For engineers interested in practical implementation within verification contexts, including AI-assisted test generation and analysis workflows, relevant training material is available through Alpinum’s training programmes: https://alpinumconsulting.com/services/training/

For teams looking to move beyond theory, the next step is not large-scale transformation. It is a controlled pilot aligned to real verification challenges, with clear ownership, measurable outcomes, and engineering accountability at every stage.

For a presentation-ready version of this material, download the full AI in Design Verification slide deck.

References

[1] Accellera Systems Initiative, “Universal Verification Methodology (UVM) Working Group,” Accellera, 2026. [Online]. Available through the Accellera UVM working group and UVM standard download pages.

[2] C. Bennett and K. Eder, “Review of Machine Learning for Micro-Electronic Design Verification,” arXiv, Mar. 2025.

[3] J. Ye et al., “From Concept to Practice: an Automated LLM-aided UVM Machine for RTL Verification,” arXiv, Apr. 2025.

[4] A. Menon et al., “Enhancing Large Language Models for Hardware Verification: A Novel SystemVerilog Assertion Dataset,” arXiv, Mar. 2025.

[5] S. Pothireddypalli et al., “Agentic AI-based Coverage Closure for Formal Verification,” arXiv, Mar. 2026.

[6] Accellera Systems Initiative, “UVM Mixed-Signal Standard v1.0,” Accellera, 2025.

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math. He moved to semiconductor Design Verification (DV) in 1994, verifying designs (on silicon and FPGA) for commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).
- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.
- Alpinum Consulting provides strategic board-level consultancy services, helping companies to grow.
- Alpinum’s training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.
- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
