On 24 March 2026, Arm Holdings announced its first in-house data centre processor, the Arm AGI CPU. This marks a strategic shift from licensing IP to delivering complete silicon platforms, extending Arm’s broader compute platform strategy into production silicon.

The processor is designed for agentic AI workloads, where systems must coordinate reasoning, planning, and execution across multiple accelerators. These workloads require sustained CPU orchestration rather than relying solely on accelerator throughput.

Arm reports more than 2× performance per rack compared to current x86-based systems. This is enabled by a high-density architecture featuring 136 Neoverse V3 cores per CPU, with a capacity of over 45,000 cores per rack in liquid-cooled deployments. The processor is manufactured by Taiwan Semiconductor Manufacturing Company (TSMC) using a 3nm process, supporting improved efficiency and compute density.
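To put the density figures in context, the arithmetic below estimates how many CPUs a rack would need to reach the quoted core count. It is a rough sketch that treats "over 45,000" as a lower bound and assumes every CPU in the rack is a 136-core part; the CPU count is derived here and is not a figure published by Arm.

```python
import math

CORES_PER_CPU = 136       # from the announcement
CORES_PER_RACK = 45_000   # "over 45,000", treated here as a lower bound (assumption)


def min_cpus_for(cores: int, cores_per_cpu: int) -> int:
    """Smallest whole number of CPUs that provides at least `cores` cores."""
    return math.ceil(cores / cores_per_cpu)


cpus_per_rack = min_cpus_for(CORES_PER_RACK, CORES_PER_CPU)
print(cpus_per_rack)                   # 331
print(cpus_per_rack * CORES_PER_CPU)   # 45016
```

Under these assumptions, a rack needs roughly 331 CPUs to exceed 45,000 cores, which indicates the scale of CPU-to-CPU and CPU-to-accelerator integration involved.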

Meta is the lead partner and initial deployment customer, with additional ecosystem support from OpenAI, Cloudflare, SAP, and SK Telecom. Arm will also provide board and rack-level designs through the Open Compute Project, working with server vendors including Lenovo, ASRock Rack, and Supermicro.

System-Level Implications for Data Centre Architecture

The introduction of an in-house CPU changes how data centre systems are architected. Control is no longer distributed across multiple vendors. Instead, a single provider can define CPU behaviour, interconnect strategy, and system-level integration.

This has direct implications for verification scope and architectural decision-making. As AI workloads scale, system-level design and verification approaches become essential to ensure predictable behaviour across tightly coupled compute resources.

Verification and Integration Challenges at Scale

As compute density increases, verification challenges extend beyond individual components. The interaction between CPUs, accelerators, memory systems, and interconnects must be validated under realistic conditions.

This requires a shift towards a risk-based verification strategy, in which effort is prioritised according to system complexity and the potential impact of failure. At the same time, teams must manage programme risk at system scale, ensuring that integration behaviour is understood before deployment.
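One simple way to illustrate risk-based prioritisation is to score each integration area by complexity and failure impact, then share the verification effort in proportion to the score. The sketch below is illustrative only: the area names, the 1 to 5 scales, the scores, and the `complexity * impact` formula are all assumptions made for this example, not a method described by Arm or a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntegrationArea:
    name: str
    complexity: int  # 1 (simple) to 5 (highly complex); hypothetical scale
    impact: int      # 1 (minor) to 5 (severe) if it fails; hypothetical scale

    @property
    def risk(self) -> int:
        # Assumed scoring rule: risk grows with both complexity and impact.
        return self.complexity * self.impact


def allocate_effort(areas: list[IntegrationArea], total_days: float) -> dict[str, float]:
    """Split total_days across areas in proportion to risk, highest risk first."""
    total_risk = sum(a.risk for a in areas)
    if total_risk == 0:
        raise ValueError("at least one area must have a non-zero risk score")
    ranked = sorted(areas, key=lambda a: a.risk, reverse=True)
    return {a.name: total_days * a.risk / total_risk for a in ranked}


# Hypothetical areas and scores, for illustration only.
areas = [
    IntegrationArea("Management firmware", complexity=2, impact=2),
    IntegrationArea("CPU-accelerator interaction", complexity=5, impact=5),
    IntegrationArea("Rack-level interconnect", complexity=3, impact=5),
    IntegrationArea("Memory subsystem", complexity=4, impact=4),
]

plan = allocate_effort(areas, total_days=120)
for name, days in plan.items():
    print(f"{name}: {days:.0f} days")
```

In practice the scores would come from design reviews and field data rather than fixed numbers, and a real plan would also account for dependencies between areas.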

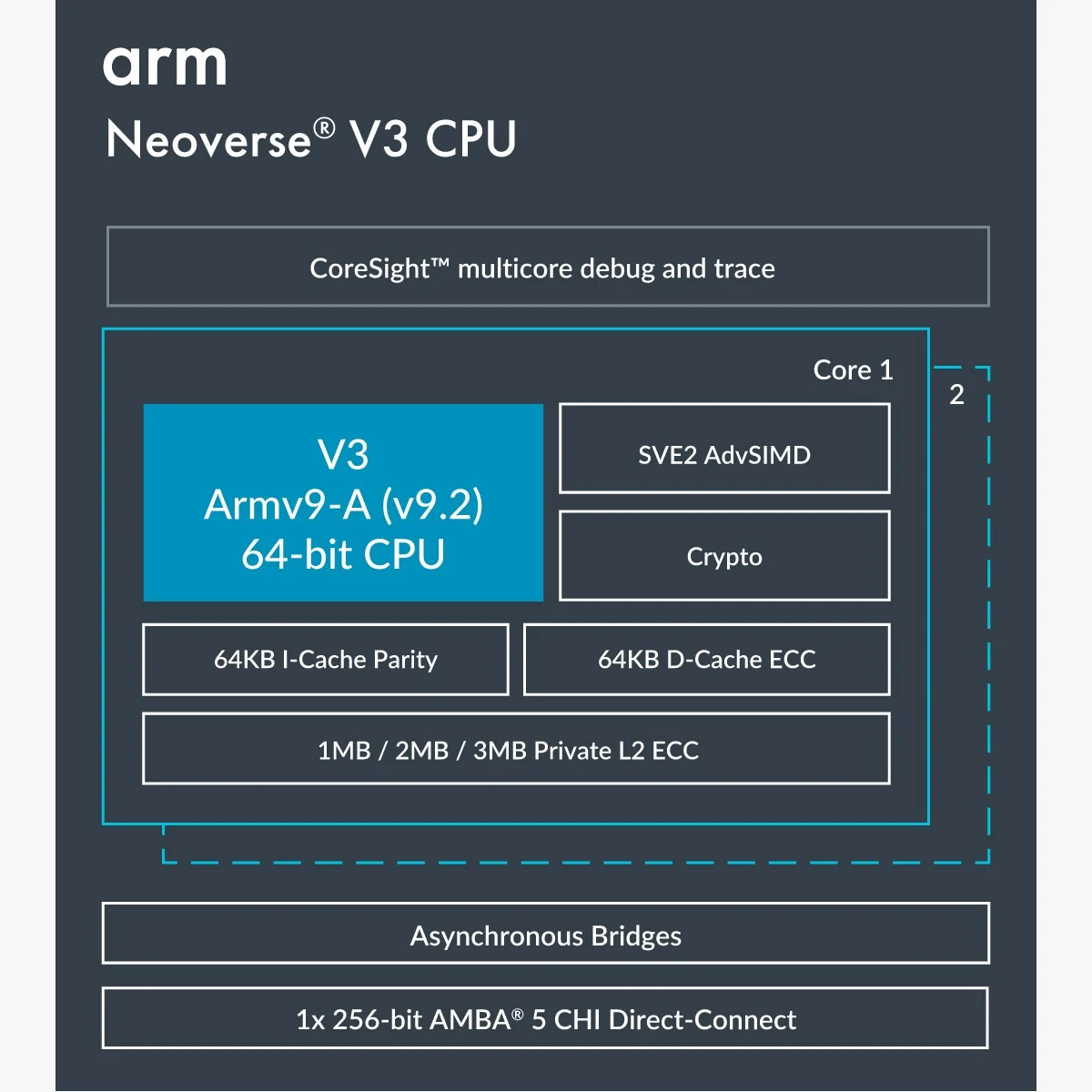

Neoverse V3 Architecture Context

The architectural structure of the Neoverse V3 core highlights the system-level design considerations required for high-density AI compute. It reflects how compute, cache, and interconnect elements are organised to support sustained throughput across large-scale deployments.

Figure 1: Arm Neoverse V3 CPU architecture. Source: Arm Holdings

Conclusion

Arm’s AGI CPU represents more than a new processor. It reflects a shift towards vertically integrated data centre platforms designed for AI-driven workloads. This changes not only performance expectations, but also how AI-scale data centre systems are architected, integrated, verified, and deployed.


Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to over 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).

- Alpinum Services provides RTL-to-GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting also provides strategic board-level consultancy services, helping companies to grow.

- The Alpinum training department provides self-paced, fully online training in SystemVerilog, UVM (Introduction and Advanced), Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
