Introduction

Verification planning is often treated as an administrative step. In practice, it is the primary control mechanism for achieving confidence in sign-off. When verification planning is weak, teams compensate with more tests, more regressions, and more compute. This rarely improves confidence. It increases activity without improving evidence. Effective verification planning establishes how requirements will be translated into measurable, observable, and provable behaviours. It defines how coverage will be interpreted and how closure decisions will be made [1].

The central problem is not collecting coverage. It is ensuring that the coverage reflects the real design intent.

Five Key Learning Points

| Key learning point | Link to detailed explanation | External reference |
| --- | --- | --- |
| Requirements must map to measurable verification artefacts | Requirements for Coverage Traceability | [2] |
| Coverage is multi-dimensional, not a single percentage | Coverage is Not a Single Number | [1] |
| Formal and simulation must be planned together | Integrating Formal and Simulation | [1] |
| Coverage closure is an iterative, prioritised process | Closing Coverage Gaps Systematically | [1] |
| Observability through assertions is critical for sign-off confidence | Observability and Assertion Strategy | [1] |

Requirements for Coverage Traceability

A working verification plan starts with a structured mapping between specification and verification artefacts.

This mapping must connect:

- Requirements to features

- Features to assertions and checkers

- Assertions to coverage points

- Coverage points to tests

The Accellera case study explicitly states that verification plans link features to cover points, checkers, and test cases, enabling measurable tracking against the plan rather than against activity [2].
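The mapping chain above can be sketched as a small data model that reports requirements lacking a complete trace. This is an illustrative sketch only, not the format used in the Accellera case study: the class names, the `untraced` helper, and the example requirement and feature names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Feature:
    """A feature and the verification artefacts linked to it (hypothetical model)."""
    name: str
    assertions: list[str] = field(default_factory=list)
    cover_points: list[str] = field(default_factory=list)
    tests: list[str] = field(default_factory=list)


@dataclass
class Requirement:
    """A specification requirement and the features that implement it."""
    req_id: str
    features: list[str] = field(default_factory=list)


def untraced(requirements, features):
    """Return {req_id: [reasons]} for requirements without a complete trace."""
    gaps = {}
    for req in requirements:
        reasons = []
        if not req.features:
            reasons.append("no feature")
        for name in req.features:
            feat = features.get(name)
            if feat is None:
                reasons.append(f"{name}: feature not in plan")
                continue
            if not feat.assertions:
                reasons.append(f"{name}: no assertion or checker")
            if not feat.cover_points:
                reasons.append(f"{name}: no cover point")
            if not feat.tests:
                reasons.append(f"{name}: no test")
        if reasons:
            gaps[req.req_id] = reasons
    return gaps


# Example plan: names are invented for illustration.
features = {
    "fifo_overflow": Feature("fifo_overflow", ["a_no_overflow"], ["cp_full"], ["test_fill"]),
    "fifo_reset": Feature("fifo_reset", [], ["cp_reset"], ["test_reset"]),
}
requirements = [
    Requirement("REQ-001", ["fifo_overflow"]),
    Requirement("REQ-002", ["fifo_reset"]),
    Requirement("REQ-003"),
]

print(untraced(requirements, features))
# {'REQ-002': ['fifo_reset: no assertion or checker'], 'REQ-003': ['no feature']}
```

A check of this kind can run whenever the plan changes, so that a requirement without evidence is visible before coverage numbers are discussed.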

Why this matters

Without traceability:

- Coverage cannot be interpreted

- Missing behaviour cannot be identified

- Sign-off becomes subjective

With traceability:

- Every requirement has measurable evidence

- Progress can be tracked against intent

- Coverage gaps are meaningful

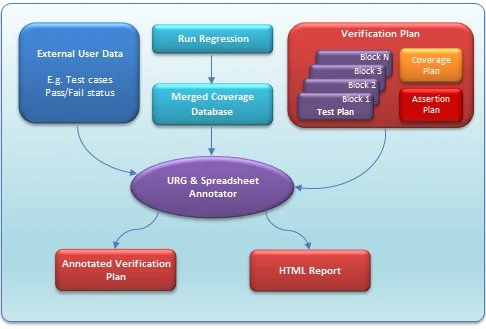

Figure 1: Verification planning integrates requirements, regression execution, and coverage analysis into a closed-loop system that drives coverage closure. Source: design-reuse.com

Figure 1 illustrates how verification planning operates as a closed-loop system rather than a linear mapping. Requirements are decomposed into verification plans and test cases, which feed regression execution. Coverage data is continuously merged and analysed, providing feedback into the planning process. This feedback loop enables teams to identify coverage gaps, refine stimulus, and adjust verification intent. The inclusion of reporting and coverage-merging stages highlights that closure is driven by measured evidence rather than by test execution alone.

This mapping is not optional. It is the foundation of metric-driven verification. The Accellera flow shows that planning defines how coverage and assertions will be constructed and tracked throughout execution [2].

Coverage is Not a Single Number

Coverage is often reported as a percentage. This is insufficient and potentially misleading.

The DesignCon study highlights that coverage is a multi-dimensional space including:

- Structural coverage such as line and toggle

- Functional coverage representing behaviour

- Assertion coverage representing observability [1]

Implications for planning

A single coverage number cannot represent verification completeness because:

- Structural coverage does not guarantee functional correctness

- Functional coverage does not guarantee detection of incorrect behaviour

- Assertion coverage may expose issues missed by both

Verification planning must define:

- Which coverage dimensions are required

- How each dimension contributes to sign-off

- Acceptable thresholds for each metric

This is a system-level decision, not a tooling decision.
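As a minimal sketch of why per-dimension thresholds matter, the example below evaluates each coverage dimension against its own target instead of averaging them. The threshold values, dimension names, and the `evaluate` helper are assumptions chosen for illustration; real targets belong in the verification plan.

```python
# Hypothetical per-dimension sign-off thresholds, in percent.
THRESHOLDS = {"line": 98.0, "toggle": 95.0, "functional": 100.0, "assertion": 100.0}


def evaluate(measured, thresholds=THRESHOLDS):
    """Return {dimension: (measured, target)} for every dimension below target.

    A dimension with no measurement at all is treated as failing.
    """
    failing = {}
    for dim, target in thresholds.items():
        value = measured.get(dim)
        if value is None or value < target:
            failing[dim] = (value, target)
    return failing


# Example regression result: assertion coverage was never collected.
measured = {"line": 99.1, "toggle": 96.4, "functional": 92.0}

average = sum(measured.values()) / len(measured)
print(f"average of reported metrics: {average:.1f}%")  # 95.8%
print(evaluate(measured))
# {'functional': (92.0, 100.0), 'assertion': (None, 100.0)}
```

The averaged figure looks healthy, while the per-dimension view shows a functional shortfall and a dimension that was never measured.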

Integrating Formal and Simulation

There is no single verification approach that guarantees closure. Simulation provides scalability but limited completeness. Formal provides exhaustiveness but limited capacity. The DesignCon work emphasises that closure depends on using formal to assist simulation and simulation to assist formal [1].

Planning implications

Verification planning must define:

- Which blocks are suitable for formal

- Which behaviours require simulation

- Where hybrid approaches are required

For example:

- Control logic benefits from formal proof

- Interface correctness benefits from assertions

- Complex data transformations rely on simulation

This is not a tool selection problem. It is a planning decision based on design characteristics.
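One way to make this planning decision explicit is to record it as a rule that maps design characteristics to a verification method. The sketch below is deliberately simplistic: the block categories, the state-bit capacity limit, and the `allocate` function are hypothetical, and a real plan would weigh many more factors.

```python
from enum import Enum


class Method(Enum):
    FORMAL = "formal"
    SIMULATION = "simulation"
    HYBRID = "hybrid"


def allocate(kind: str, state_bits: int, formal_capacity_bits: int = 200) -> Method:
    """Suggest a method from block kind and size (illustrative rule only).

    `formal_capacity_bits` is an invented stand-in for formal tool capacity.
    """
    if kind == "control":
        # Control logic suits proof, provided the block fits tool capacity.
        return Method.FORMAL if state_bits <= formal_capacity_bits else Method.HYBRID
    if kind == "interface":
        # Interface assertions can be reused in both formal and simulation.
        return Method.HYBRID
    if kind == "datapath":
        # Complex data transformations rely on simulation.
        return Method.SIMULATION
    raise ValueError(f"unknown block kind: {kind}")


# Hypothetical block inventory: (kind, approximate state bits).
blocks = {
    "arbiter": ("control", 120),
    "axi_bridge": ("interface", 900),
    "fft_core": ("datapath", 50_000),
    "power_fsm": ("control", 1_500),
}

for name, (kind, bits) in blocks.items():
    print(f"{name}: {allocate(kind, bits).value}")
```

Writing the rule down, even in this crude form, forces the plan to state which design characteristics drive the choice.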

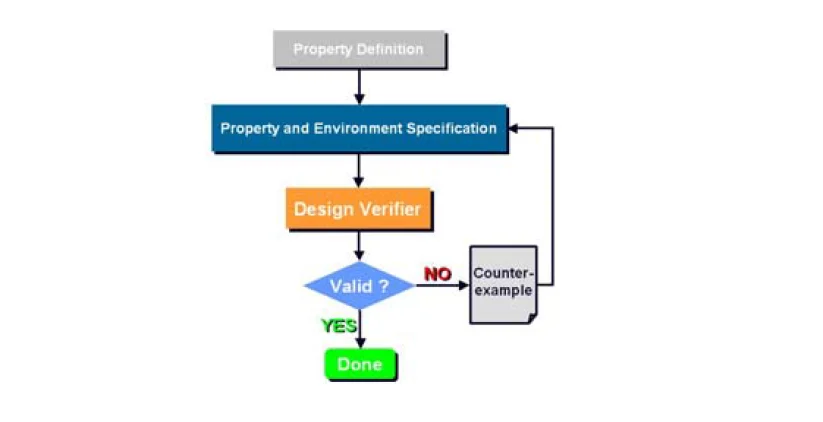

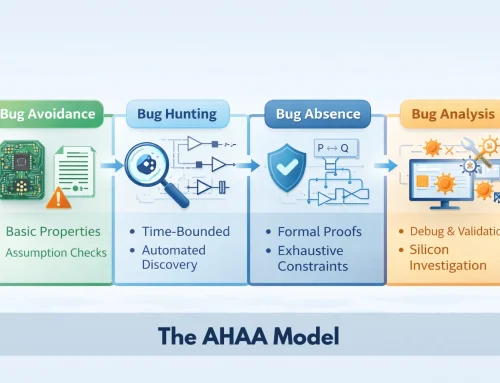

Figure 2: Formal and simulation complement each other by covering gaps in each approach [1]. Source: researchgate.net

Figure 2 illustrates how formal verification operates as a property-driven feedback loop. Properties derived from design intent are evaluated against the implementation. When violations occur, counterexamples are generated, providing precise debug insight. This process complements simulation by identifying corner cases that are difficult to reach through stimulus alone. In a verification planning context, this enables targeted closure of coverage gaps and improves confidence in control-heavy logic where exhaustive exploration is required.

Closing Coverage Gaps Systematically

The closure process

Coverage closure is iterative and resource-constrained. It follows a structured loop:

- Simulate and collect coverage

- Identify coverage holes

- Rank holes by criticality

- Target holes using constraints or directed tests

- Refine the coverage model

This loop continues until progress slows and further effort no longer justifies the return [1].
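Two parts of this loop lend themselves to a short sketch: ranking holes by criticality, and detecting when progress has slowed. The risk scale, effort estimates, window size, and gain threshold below are all assumptions for illustration, not values taken from [1].

```python
from dataclasses import dataclass


@dataclass
class Hole:
    name: str
    risk: int      # 1 (low) to 5 (high), assigned by the team
    effort: float  # estimated engineer-days to close


def rank(holes):
    """Order holes by highest risk first, then by lowest effort."""
    return sorted(holes, key=lambda h: (-h.risk, h.effort))


def should_stop(history, window=3, min_gain=0.1):
    """Return True when coverage gained over the last `window` iterations
    is below `min_gain` percentage points."""
    if len(history) <= window:
        return False
    return history[-1] - history[-1 - window] < min_gain


# Hypothetical holes found after a regression.
holes = [
    Hole("cfg_reg_rare_mode", risk=2, effort=1.0),
    Hole("fifo_full_and_flush", risk=5, effort=4.0),
    Hole("irq_during_reset", risk=5, effort=2.0),
]
print([h.name for h in rank(holes)])
# ['irq_during_reset', 'fifo_full_and_flush', 'cfg_reg_rare_mode']

# Functional coverage (percent) after each closure iteration.
history = [62.0, 81.5, 90.2, 93.0, 93.04, 93.05, 93.06]
print(should_stop(history))  # True
```

A slowdown signal like this is only a prompt for review. Whether to stop still depends on the risk of the holes that remain open.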

What distinguishes effective planning

Effective verification planning:

- Defines closure criteria early

- Classifies coverage gaps by risk

- Identifies when to stop

Without this, teams continue chasing diminishing returns late in the schedule.

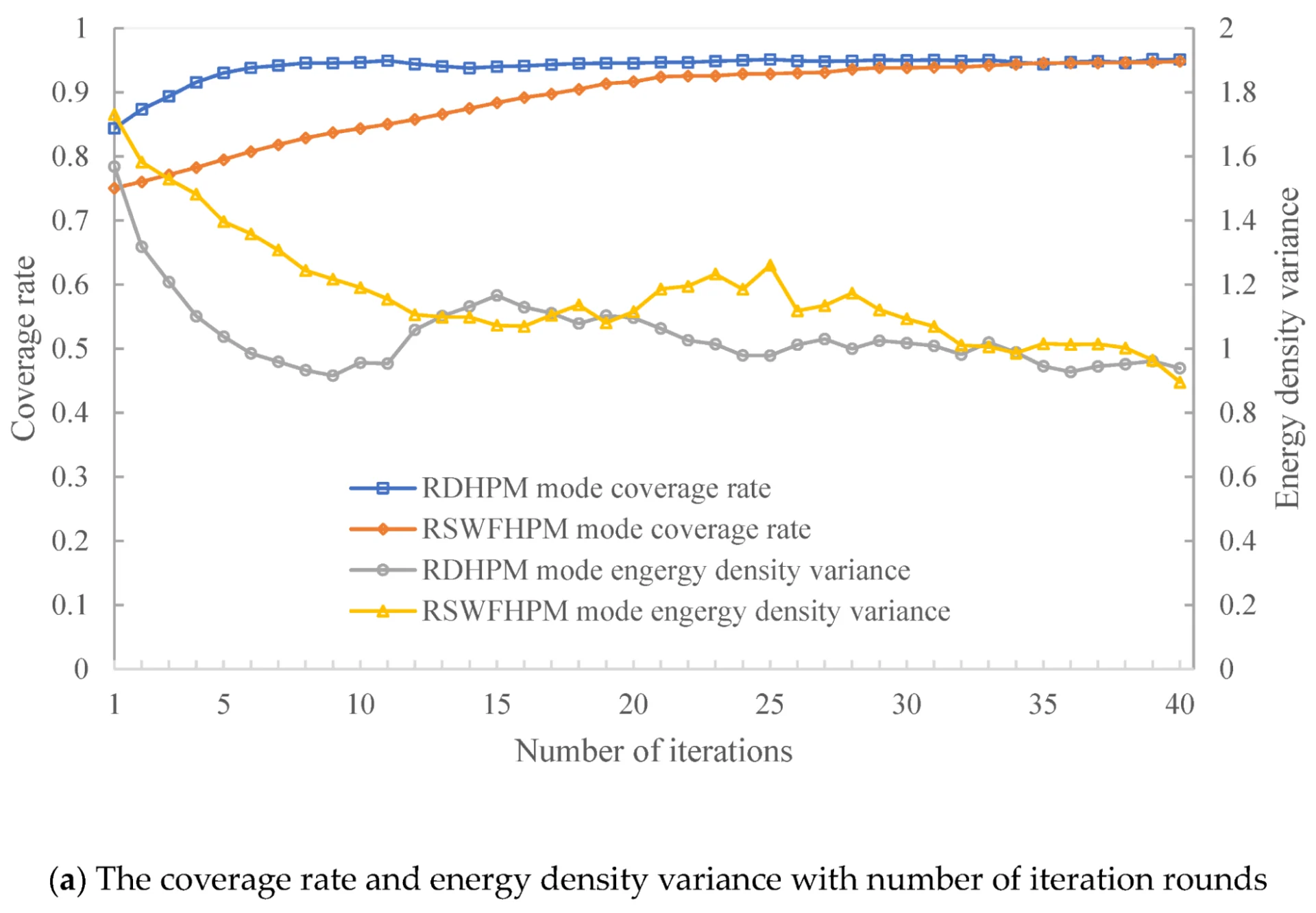

Figure 3: Coverage closure progresses from large gaps to smaller, harder-to-close holes requiring disproportionate effort. Source: mdpi.com

Figure 3 illustrates the non-linear nature of coverage closure. Early in the verification cycle, broad stimuli and basic scenarios quickly close large coverage gaps. As verification progresses, remaining gaps correspond to corner cases, rare conditions, or hard-to-observe behaviours. These require directed stimulus, constraint refinement, or formal techniques to close. This behaviour introduces diminishing returns, making it necessary to prioritise coverage holes based on risk rather than attempting to close all metrics uniformly.

The coverage closure phase described in DesignCon illustrates how progress slows as remaining gaps become increasingly complex [1].

Observability and Assertion Strategy

Verification planning often overemphasises stimulus generation. This addresses controllability. However, correctness depends equally on observability. Observability ensures that incorrect behaviour is detected. Assertion density is identified as a key metric for measuring observability across the design [1].
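A simple way to track this metric is to compute assertion density per block and flag blocks that fall below a planned floor. The normalisation used here (assertions per 100 lines of RTL), the floor value, and the block figures are assumptions for illustration; the source does not prescribe a specific formula.

```python
def assertion_density(assertions: int, rtl_lines: int) -> float:
    """Assertions per 100 lines of RTL (one possible normalisation)."""
    if rtl_lines <= 0:
        raise ValueError("rtl_lines must be positive")
    return 100.0 * assertions / rtl_lines


# Hypothetical floor below which a block is considered poorly observed.
MIN_DENSITY = 1.0

# Hypothetical inventory: block name -> (assertion count, RTL line count).
blocks = {"arbiter": (24, 800), "dma_ctrl": (3, 1500), "crc": (10, 400)}

low = sorted(
    name for name, (count, lines) in blocks.items()
    if assertion_density(count, lines) < MIN_DENSITY
)
print(low)  # ['dma_ctrl']
```

Density says nothing about assertion quality, so a report like this is best read as a pointer to blocks that need review, not as proof of observability.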

Planning implications

Verification plans must define:

- Assertion strategy

- Checker coverage

- Observability metrics

Without sufficient observability:

- Bugs remain undetected

- Coverage appears complete but is misleading

- Sign-off confidence is weak

Sign-off Planning and Decision Confidence

Sign-off is not triggered by coverage reaching a predefined threshold. It is an engineering decision based on evidence, risk, and the level of confidence achieved across the design. The Accellera methodology structures this process through milestone-based planning, progressing through stages such as pre-alpha, alpha, beta, and final verification, where each stage represents increasing verification completeness and a corresponding reduction in risk [2]. This staged approach provides a controlled framework for assessing readiness, rather than relying on a single coverage metric to justify closure.

For sign-off planning to be effective, the criteria must be explicitly defined from the outset. This includes aligning coverage targets directly with requirements, identifying acceptable levels of residual risk, and specifying the evidence required to support closure decisions. When these elements are clearly established and tracked throughout the verification lifecycle, sign-off becomes a defensible outcome grounded in measurable verification results, rather than an assumption driven by schedule or incomplete indicators of progress.

Constraints, Trade-offs, and Risks

Verification planning operates within real and unavoidable constraints. These include schedule pressure driven by project timelines, compute limitations that restrict regression scale, tool capacity that affects throughput and analysis depth, and incomplete or evolving specifications that introduce uncertainty into verification intent.

Within this context, trade-offs are not optional and must be made explicit at the planning stage. Decisions typically involve balancing full proof against acceptable confidence, determining how far coverage completeness can be pursued within schedule limits, and choosing between abstraction for scalability and fidelity for accuracy. These decisions directly influence verification effectiveness and must be consciously managed rather than deferred.

The DesignCon work makes it clear that no single verification approach applies universally across all designs [1]. As a result, verification planning must explicitly define where effort delivers the highest return in terms of risk reduction and confidence. This is a system-level decision that depends on design characteristics, project constraints, and verification objectives.

Conclusion

Verification planning that actually works is defined by its ability to produce measurable, traceable, and defensible evidence of correctness. It is not defined by the volume of tests executed or coverage numbers reported, but by how clearly verification intent is translated into verifiable outcomes. At its core, an effective approach ensures that requirements are directly mapped to coverage and assertions, that coverage is treated as a multi-dimensional construct rather than a single metric, and that formal and simulation techniques are integrated in a complementary manner. It also requires a structured closure methodology that guides teams through diminishing returns and an explicit observability strategy to ensure that incorrect behaviour is reliably detected.

When these elements are in place, coverage gains meaning as evidence rather than activity, and sign-off becomes an informed engineering decision based on risk and proof, rather than a milestone driven by schedule.

Continue Exploring

For teams seeking to improve verification planning discipline and strengthen coverage-to-sign-off traceability:

👉 Design verification training: https://alpinumconsulting.com/services/training/design-verification-for-sv-uvm-training/

👉 Verification services overview: https://alpinumconsulting.com/services/designverification/

👉 Upcoming semiconductor and verification training programmes: https://alpinumconsulting.com/services/training/

References

[1] H. Foster and P. Yeung, “Planning Formal Verification Closure,” in Proceedings of DesignCon 2007, 2007. Available: https://www.researchgate.net/publication/290178578_Planning_formal_verification_closure

[2] P. Kaunds, R. Bothe, and J. Johnson, “Case Study of Verification Planning to Coverage Closure at Block, Subsystem and System on Chip Level,” in DVCon Europe 2018 Proceedings, Accellera Systems Initiative, 2018. Available: https://dvcon-proceedings.org/wp-content/uploads/Case-Study-of-Verification-Planning-to-Coverage-Closure-@Block-Subsystem-and-System-on-Chip-Level.pdf

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).

- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting provides strategic board-level consultancy services, helping companies to grow.

- The Alpinum training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
