Lessons from DVClub Bristol January 2026 for System-Level Confidence

Introduction

Processor verification has become the central technical constraint in modern semiconductor programmes. As processor architectures scale in complexity and integrate AI-centric functionality, verification effort increasingly determines delivery confidence, cost, and schedule risk. Presentations at DVClub Bristol in January 2026 provided a grounded technical perspective across RISC-V processors, AI-driven EDA evolution, and scalable verification infrastructure. Taken together, these insights show a structural shift in how verification must be approached at system scale.

Five Key Learning Points

| Key learning point | Link to detailed explanation | External reference |
| --- | --- | --- |
| Processor verification is now the dominant complexity driver in SoC design | Processor Verification at Scale | Dave Kelf – A Layered, Graph-based Approach to RISC-V Verification [1] |
| Verification must move from block correctness to system-level integrity | System-Level Verification as the New Baseline | DVClub Bristol processor verification presentations [1] |
| AI is shifting verification from test generation to search and optimisation | AI in Verification Workflows | Simon Davidmann – AI and EDA for DV Engineers [2] |
| Near-memory AI accelerators introduce new ordering and coverage risks | Verification Challenges in Near-Memory AI Architectures | Vinayak V P – Verification Challenges for RISC-V Near-Memory AI Accelerators [3] |
| Open, scalable verification flows are becoming essential for accessibility | Open and Scalable Verification Infrastructure | Andy Bond – Open-source AVL and Python-based testbenches [4] |

Processor Verification at Scale

From Instruction Correctness to Architectural State Space

Application-class processors represent the most demanding verification targets in digital design.

State-space growth arises from instruction combinations, privilege transitions, memory ordering, and software interaction, creating verification search spaces that extend far beyond controller-class assumptions.

Even a single processor core may require extensive execution exploration to establish behavioural confidence, with verification investment dominating engineering costs. RISC-V flexibility further expands this space through diverse instruction encodings, argument permutations, and software execution paths, all of which must be validated across architectural and system contexts [1]. The implication is direct: verification is no longer a supporting activity but the primary determinant of programme certainty.
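A rough back-of-envelope sketch can make the growth concrete. The dimension sizes below are hypothetical values chosen purely for illustration and are not figures from the presentation; the point is only that per-instruction choices multiply with every additional instruction in a sequence.

```python
from typing import Dict

# Hypothetical dimension sizes, assumed for illustration only.
DIMENSIONS: Dict[str, int] = {
    "instruction_opcodes": 200,
    "operand_classes": 8,        # e.g. zero, maximum, negative, misaligned
    "privilege_modes": 3,        # machine, supervisor, user
    "memory_ordering_cases": 4,
}


def scenario_count(dimensions: Dict[str, int], sequence_length: int = 1) -> int:
    """Count distinct scenarios for a sequence of instructions.

    Opcode and operand choices multiply for every instruction in the
    sequence; privilege and ordering context is applied once.
    """
    per_instruction = dimensions["instruction_opcodes"] * dimensions["operand_classes"]
    context = dimensions["privilege_modes"] * dimensions["memory_ordering_cases"]
    return (per_instruction ** sequence_length) * context


for length in (1, 2, 3):
    print(length, scenario_count(DIMENSIONS, length))
```

Even with these modest assumed numbers, a three-instruction sequence already yields tens of billions of scenarios, which is why exhaustive enumeration is not a realistic strategy.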

System-Level Verification as the New Baseline

Layered Integrity Across Hardware and Software

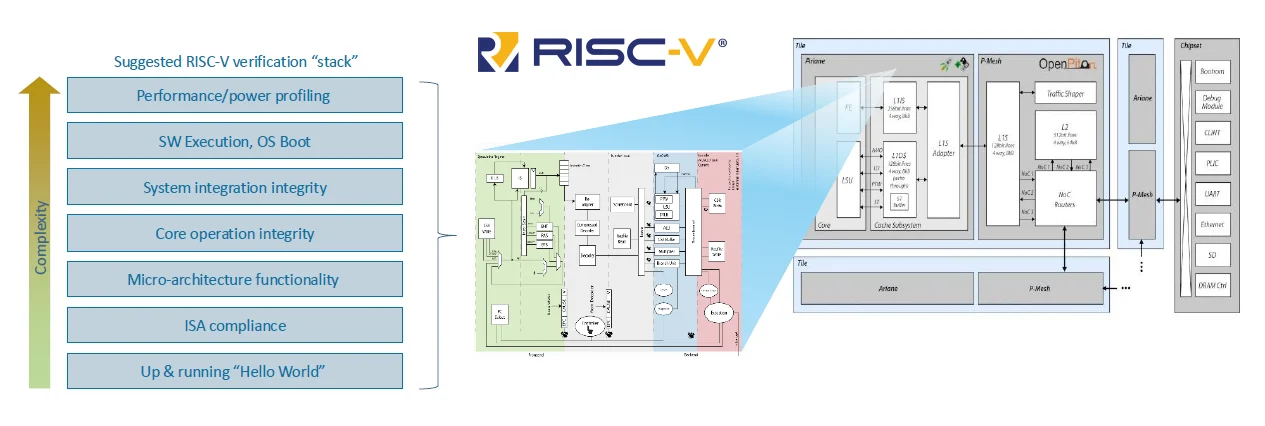

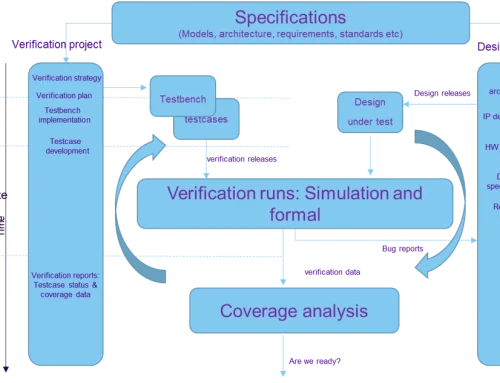

Modern RISC-V verification must progress through layered assurance stages, beginning with ISA compliance and extending through micro-architecture correctness, system integration, operating system execution, and performance behaviour [1].

- Correct instructions alone do not ensure correct systems.

- Confidence must instead be demonstrated across concurrency, coherence, memory management, and real software execution.

Figure 1 illustrates how the verification scope expands with architectural complexity, with assurance progressing from ISA compliance to full system integrity and performance validation. It also explains why late-stage uncertainty persists when verification remains confined to block-level correctness.

Figure 1: Layered System-Level Processor Verification [1].
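One way to picture the layered stages is as an ordered series of gates, where confidence extends only as far as the first layer that has not yet passed. The sketch below is an illustrative assumption: the layer identifiers paraphrase the stages named above, and the gating rule is a simplification rather than the method presented in [1].

```python
from typing import Dict, Optional

# Layer names paraphrase the stages described in the text (assumed identifiers).
LAYERS = [
    "isa_compliance",
    "microarchitecture",
    "system_integration",
    "os_execution",
    "performance",
]


def highest_layer_reached(results: Dict[str, bool]) -> Optional[str]:
    """Return the last layer passed before the first failing or missing layer."""
    reached = None
    for layer in LAYERS:
        if not results.get(layer, False):
            break
        reached = layer
    return reached


status = {
    "isa_compliance": True,
    "microarchitecture": True,
    "system_integration": False,
    "os_execution": True,   # a later pass does not compensate for an earlier gap
}
print(highest_layer_reached(status))
```

Under this simplified rule, a passing operating system boot does not raise confidence beyond the micro-architecture layer while system integration remains open.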

AI in Verification Workflows

From Test Generation to Search, Optimisation, and Explanation

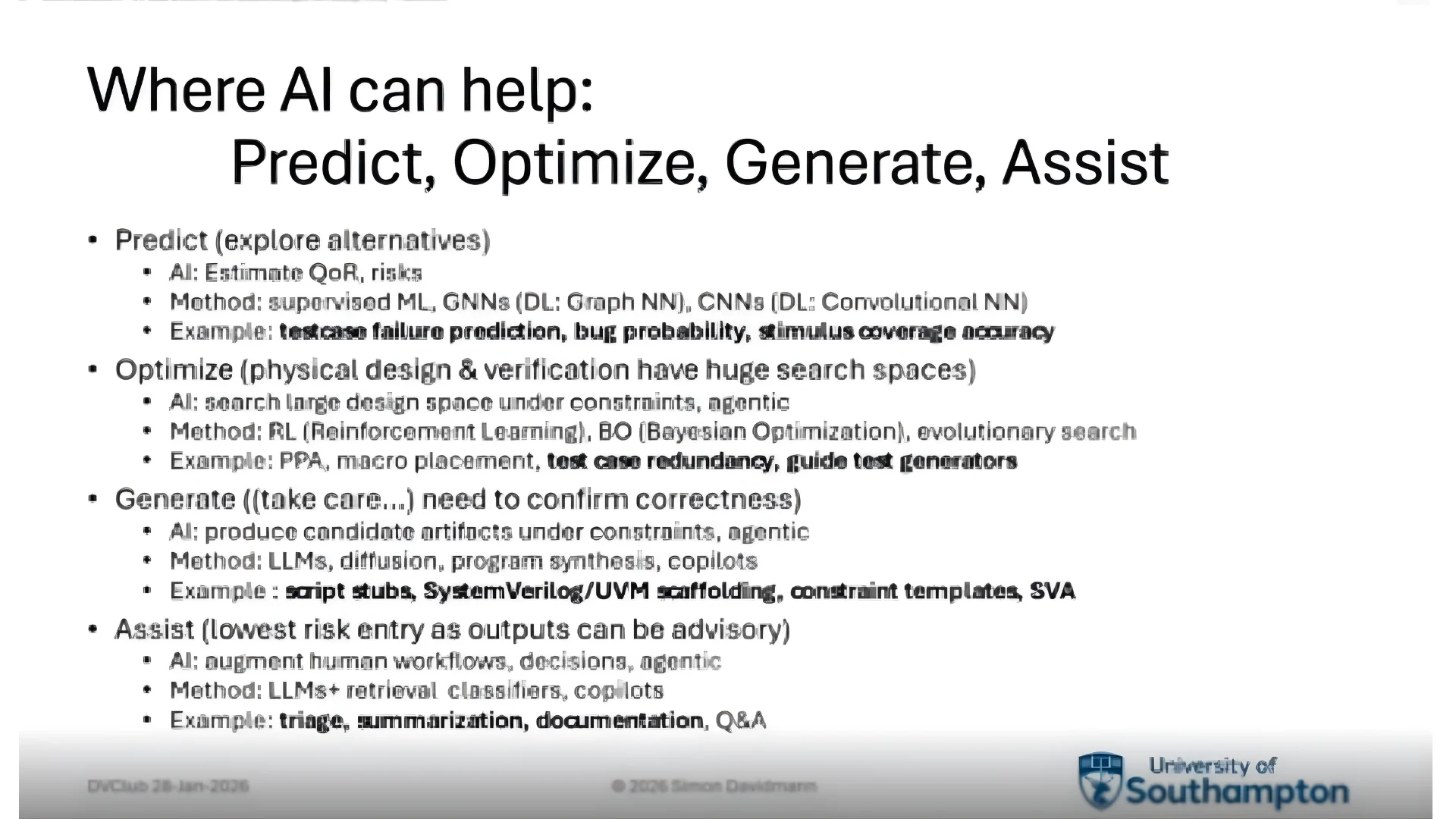

Artificial intelligence is now influencing electronic design automation across prediction, optimisation, generation, and analytical reasoning within verification environments [2]. This shift marks a transition away from simply generating larger regression suites. Future verification effectiveness depends on intelligent exploration of state space, prioritised coverage closure, and automated reasoning about failures. Agent-based AI systems can orchestrate tools, reason about outcomes, and iterate toward verification objectives such as coverage closure or root-cause isolation [2].

Figure 2 shows AI agents coordinating prediction, optimisation, generation, and analysis within DV workflows. It highlights augmentation rather than replacement: engineering judgement remains central while automation accelerates exploration and insight.

Figure 2: AI-Assisted Verification Decision Loop [2].
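The idea of prioritised coverage closure can be illustrated with a much simpler stand-in than an AI agent: a greedy selection that repeatedly picks the test contributing the most uncovered bins. This is a classic set-cover heuristic, not the approach described in [2], and the test and bin names are hypothetical.

```python
from typing import Dict, List, Set


def prioritise_tests(test_bins: Dict[str, Set[str]]) -> List[str]:
    """Order tests greedily by how many not-yet-covered bins each one adds.

    Tests that add nothing new are dropped. Ties are broken alphabetically
    so that the result is deterministic.
    """
    remaining = dict(test_bins)
    covered: Set[str] = set()
    order: List[str] = []
    while remaining:
        best = max(sorted(remaining), key=lambda name: len(remaining[name] - covered))
        gain = remaining[best] - covered
        if not gain:
            break  # no remaining test adds new coverage
        order.append(best)
        covered |= gain
        del remaining[best]
    return order


# Hypothetical tests and the coverage bins each one hits.
tests = {
    "t_alu": {"add", "sub"},
    "t_mem": {"load", "store", "add"},
    "t_irq": {"irq", "store"},
    "t_dup": {"add"},
}
print(prioritise_tests(tests))
```

Here `t_dup` is dropped because it contributes no bin that the other tests have not already hit. An AI-assisted flow would replace the fixed bin sets with predictions and feedback from the simulator, but the objective of closing coverage with less regression effort is the same.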

RISC-V Application-Class Complexity

Concurrency, Virtualisation, and Interrupt-Driven Behaviour

The transition from controller-class processors to application-class RISC-V cores introduces fundamentally new verification risks. These include weak memory ordering, multi-core concurrency, virtual memory management, and large-scale interrupt behaviour. Such interdependent execution scenarios cannot be exhaustively validated through conventional constrained-random testing alone. AI-assisted reasoning and coverage-directed exploration therefore become essential to achieving system confidence [2].
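The weak memory ordering risk can be made concrete with the well-known message-passing litmus test. The minimal sketch below enumerates every interleaving of two small threads under sequential consistency, assuming a single shared memory and no reordering; the variable and register names are illustrative.

```python
from itertools import combinations

# Thread 0 publishes data and then sets a flag; thread 1 reads flag, then data.
T0 = [("store", "data", 1), ("store", "flag", 1)]
T1 = [("load", "flag", "r0"), ("load", "data", "r1")]


def interleavings(a, b):
    """Yield every merge of a and b that preserves each thread's program order."""
    n = len(a) + len(b)
    for positions in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in positions else next(ib) for i in range(n)]


def run(schedule):
    """Execute one interleaving against a single shared memory."""
    memory = {"data": 0, "flag": 0}
    regs = {}
    for op, addr, arg in schedule:
        if op == "store":
            memory[addr] = arg
        else:
            regs[arg] = memory[addr]
    return regs["r0"], regs["r1"]


def sc_outcomes():
    """Return the set of (r0, r1) results reachable under sequential consistency."""
    return {run(s) for s in interleavings(T0, T1)}


print(sorted(sc_outcomes()))
```

Under sequential consistency the outcome `(1, 0)`, where the flag is seen set but the data is stale, never appears. A weakly ordered memory model such as RVWMO can permit that outcome when no fences are present, so a checker built on sequentially consistent assumptions would raise false failures, and a test suite that never provokes the outcome would miss real software bugs.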

Verification Challenges in Near-Memory AI Architectures

Functional Correctness Meets Data-Centric Compute

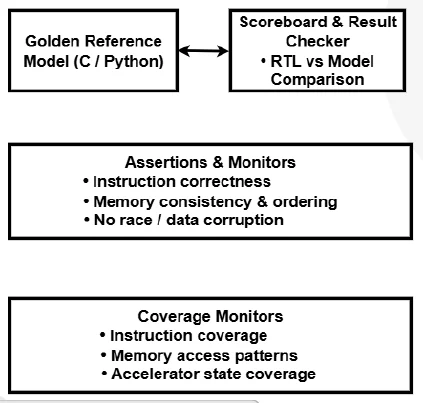

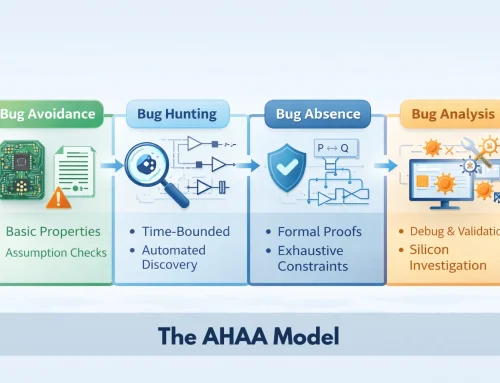

Near-memory AI accelerators reduce data movement and improve efficiency, yet they introduce new verification complexity through custom instructions, parallel execution, and altered memory visibility semantics. Effective verification therefore requires hierarchical reference models, assertion-based ordering validation, and coverage-driven exploration of corner cases in parallel execution [3].

Figure 3 demonstrates how verification responsibility spans instruction correctness, memory consistency, accelerator state coverage, and interaction among the processor core, memory subsystem, accelerator, and verification monitors. System-level observability becomes a defining challenge.

Figure 3: Verification View of a Near-Memory RISC-V AI System [3].
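Assertion-based ordering validation can be sketched as a monitor that scans an event trace and flags accelerator reads of addresses the core has written but not yet made visible. The event format and the visibility rule (a core fence publishes all pending writes) are assumptions made for illustration; a real monitor would encode the rules of the actual architecture.

```python
from typing import List, Optional, Tuple

Event = Tuple[str, str, Optional[int]]  # (agent, operation, address)


def check_ordering(trace: List[Event]) -> List[Tuple[int, int]]:
    """Return (trace index, address) for each accelerator read that hits an
    address with a core write not yet published by a fence."""
    pending = set()
    violations = []
    for index, (agent, op, addr) in enumerate(trace):
        if agent == "core" and op == "write":
            pending.add(addr)
        elif agent == "core" and op == "fence":
            pending.clear()  # assumed rule: a fence makes all prior writes visible
        elif agent == "accel" and op == "read" and addr in pending:
            violations.append((index, addr))
    return violations


ordered = [("core", "write", 0x100), ("core", "fence", None), ("accel", "read", 0x100)]
racy = [("core", "write", 0x100), ("accel", "read", 0x100)]
print(check_ordering(ordered), check_ordering(racy))
```

In a simulation flow the same check would typically be expressed as assertions bound to the memory subsystem and accelerator interfaces, which is where the system-level observability challenge arises.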

Open and Scalable Verification Infrastructure

Accessibility Without Compromising Rigour

DVClub Bristol, January 2026, also highlighted the growing importance of open and scalable verification environments. Python-based testbenches, open-source simulators, and lightweight tooling now allow meaningful verification activity to run on standard development hardware rather than specialised infrastructure.

These approaches reduce entry barriers for startups, research teams, and early-stage programmes while preserving methodological discipline. They also support reproducibility and collaboration across distributed engineering environments, as demonstrated in practical open-source verification workflows presented during the training session [4].
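The core pattern behind a Python-based testbench (seeded random stimulus, a reference model, and a scoreboard) fits in a few lines of standard-library Python. The sketch below does not use AVL or any simulator API: `dut_add8` is a hypothetical stand-in for a simulated design, and the 8-bit adder is chosen only to keep the example small.

```python
import random
from typing import Callable, List, Tuple


def reference_add8(a: int, b: int) -> int:
    """Reference model: 8-bit wrapping addition."""
    return (a + b) & 0xFF


def dut_add8(a: int, b: int) -> int:
    """Stand-in for the design under test; a real flow would drive RTL in a simulator."""
    return (a + b) % 256


def run_regression(dut: Callable[[int, int], int],
                   n_tests: int = 1000,
                   seed: int = 1) -> List[Tuple[int, int, int, int]]:
    """Drive seeded random stimulus and return (a, b, expected, actual) mismatches."""
    rng = random.Random(seed)  # a fixed seed keeps every run reproducible
    mismatches = []
    for _ in range(n_tests):
        a, b = rng.randrange(256), rng.randrange(256)
        expected, actual = reference_add8(a, b), dut(a, b)
        if expected != actual:
            mismatches.append((a, b, expected, actual))
    return mismatches


print(len(run_regression(dut_add8)))
```

Because the stimulus comes from a seeded generator, two engineers running the same seed on different machines see the same sequence and the same failures, which is the reproducibility property noted above.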

Implications for Future DVClub Cambridge

The Bristol discussions collectively indicate a structural transition in processor verification:

- Verification dominates programme risk and cost.

- System-level assurance replaces block-level correctness.

- AI becomes integral to coverage closure and debug reasoning.

- Data availability and tooling openness shape future productivity.

These themes establish a clear technical agenda for DVClub Cambridge and future verification discourse.

Looking Ahead to DVClub Cambridge

The themes discussed at DVClub Bristol will continue at the forthcoming DVClub Cambridge session, which focuses on RISC-V verification and system-level assurance.

Further details and registration are available here:

👉 https://www.tickettailor.com/events/alpinumconsulting/1990913

For discussion, collaboration, or technical engagement, contact Alpinum Consulting here:

👉 https://alpinumconsulting.com/contact-us/

References

[1] Dave Kelf, "A Layered, Graph-based Approach to RISC-V Verification", DVClub Bristol presentation.

[2] Simon Davidmann, "AI and EDA for DV Engineers", DVClub Bristol, University of Southampton. https://alpinumconsulting.com/wp-content/uploads/Simon-Davidmann-DV-Club-Bristol-AIEDA-Davidmann28-Jan-2026FINAL.pdf

[3] Vinayak V P, "Verification Challenges and Strategies for RISC-V-Based Near-Memory AI Accelerators". https://alpinumconsulting.com/wp-content/uploads/Vinayak-VP-RISC-V_Verification.pdf

[4] Andy Bond, "Training on AVL and Python-Based Hardware Testbenches", DVClub Bristol video. https://drive.google.com/file/d/1ODvlGF5tyBrpbugAFuNFXNLJRLNRl9ok/view?usp=sharing

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%!).

- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting also provides strategic board-level consultancy services, helping companies to grow.

- Alpinum's training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
