Introduction

Modern SoCs operate across multiple trust levels. They expose debug and test infrastructure, integrate third-party IP, and rely on complex firmware stacks that can alter the effective security boundary after tape-out. In this environment, the most serious failures often arise from unintended connectivity. An untrusted source influences a protected destination, or secret data reaches an observable interface.

Formal security verification for SoC design directly addresses this class of risk. Rather than asking whether the design behaved correctly for selected test cases, it asks whether any execution can permit an unauthorised flow or influence. This includes rare states, unexpected mode combinations, and corner-case sequencing that simulation cannot exhaustively cover.

Five key learning points

| Key learning point | Link to detailed explanation | External reference link |

| Security failures often come from unintended information flow, not functional bugs | Security is an information-flow problem | [1] |

| Standard SVA struggles to express many security intents; security needs flow-aware properties | Why standard SVA and FPV are not enough | [2] |

| Treat debug ports, test logic, and untrusted IP as explicit sources, then prove they cannot influence protected assets | Define sources and assets, then prove non-interference | [3] |

| Combine formal proofs with RTL taint propagation to find both reachable counterexamples and broader propagation behaviour | Combine formal with RTL taint propagation | [4] |

| Close security verification with objective-driven coverage and documented assumptions, not just “green dashboards” | Closing the loop: security sign-off and completeness | [5] |

Security is an information-flow problem

Security properties at the chip level often reduce to two flow constraints:

- Confidentiality: secret data must not flow to untrusted observation points.

- Integrity: untrusted inputs must not influence trusted assets or decisions.

In practice, security-relevant destinations include external pins, debug outputs, DMA-visible memories, status registers, and sideband interfaces that firmware can expose. Sources include debug scan chains, JTAG, test access ports, wireless or sensor inputs, untrusted IP blocks, and software-controlled registers that can be modified by less-trusted privilege levels.

The difficulty arises because security flows are rarely simple wires. They are often indirect and temporal:

- An untrusted control signal selects between two secure values.

- A secret influences an address, which selects a memory line and alters a bus pattern.

- A value becomes observable only when a ready/valid sequence aligns.

Effective verification must reason about influence across time and across control conditions, not just about value equality in a single cycle.

Why standard SVA and FPV are not enough

Functional properties describe what must occur under intended stimulus and defined operating conditions. Security properties define what must never be possible, including under adversarial sequencing, unexpected mode combinations, or rare state transitions.

A practical limitation appears quickly. Many security objectives are difficult to express directly in standard SVA when the concern is arbitrary data influence and the full reachable state space, rather than a specific scenario. Flow-oriented reasoning and dedicated security analysis are often required to demonstrate that defined sources cannot affect protected sinks under any execution path.

The implication for verification teams is clear. If the verification plan reduces security to a small set of assertions, it will overlook issues that arise from system integration, mode control, and indirect propagation paths.

Formal Security Verification for SoC design in a nutshell

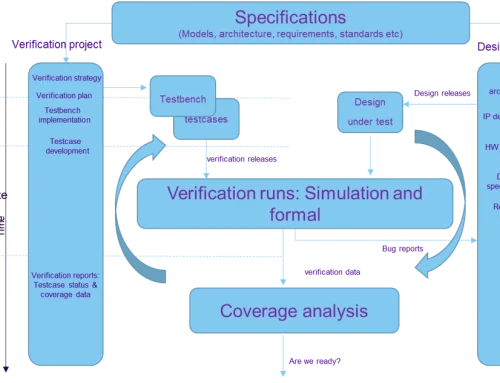

A workable formal security verification flow usually looks like this:

- Inputs: synthesizable RTL, clock/reset definitions, constraints for valid modes and environmental assumptions, plus a set of security properties (some can be generated from a policy model).

- Objective: prove that information from defined sources cannot reach protected assets (confidentiality) and cannot influence protected state or outputs (integrity).

- Debug: when the property fails, the tool should provide a trace that explains how the flow occurs and through which logic cone or state transition.

What to verify at the chip level

A practical starting set of security objectives for SoCs:

- Debug and test access cannot reach secure memories in production mode.

- Secrets (keys, seed material, fuses, secure boot measurements) never reach non-secure outputs or non-secure firmware-visible registers.

- Non-secure software cannot influence secure world configuration, security-critical clocks/resets, or privilege gating.

- Security mode transitions are monotonic and authenticated.

- Isolation boundaries hold across resets, low-power states, and scan/test modes.

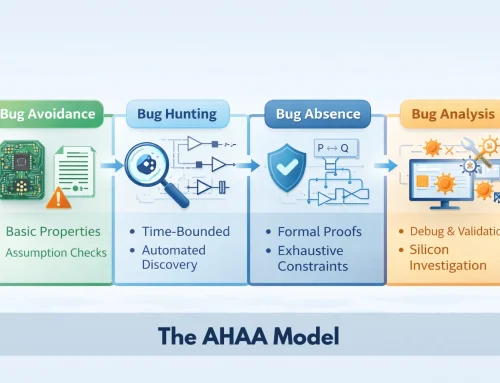

Figure 1: Formal Security Verification workflow at RTL.

Figure 1 illustrates the verification context required for chip-level security analysis. Security verification is not a standalone check. It depends on precise modelling of design intent, operational constraints, and system timing assumptions. Formal Security Verification operates on synthesizable RTL with explicitly defined security properties, environmental constraints, and clock/reset specifications. Together, these define the verification boundary for confidentiality and integrity analysis.

Define sources and assets, then prove non-interference

Security verification becomes tractable when you explicitly label:

- Sources: untrusted or potentially adversarial entry points (for example, scan inputs, JTAG, untrusted IP interfaces).

- Assets: secrets, protected memories, secure configuration registers, secure outputs.

- Allowed paths: legitimate conduits (for example, key material may flow into an encryption block but nowhere else).

From this classification, the core verification objective becomes non-interference. A defined source must not influence a protected asset. A secret must not become observable at an unauthorised interface.

Concrete example pattern: debug port to protected memory

A common integration risk occurs when a production configuration leaves a residual debug path that can influence protected memory or a register file. The correct verification target is not a specific write value. The objective is to prove that JTAG cannot influence Flash contents under any permitted sequence or operating condition.

This abstraction level supports credible SoC security sign-off by capturing unintended connectivity, mode configuration errors, and corner-case enable conditions that functional testing may not expose.

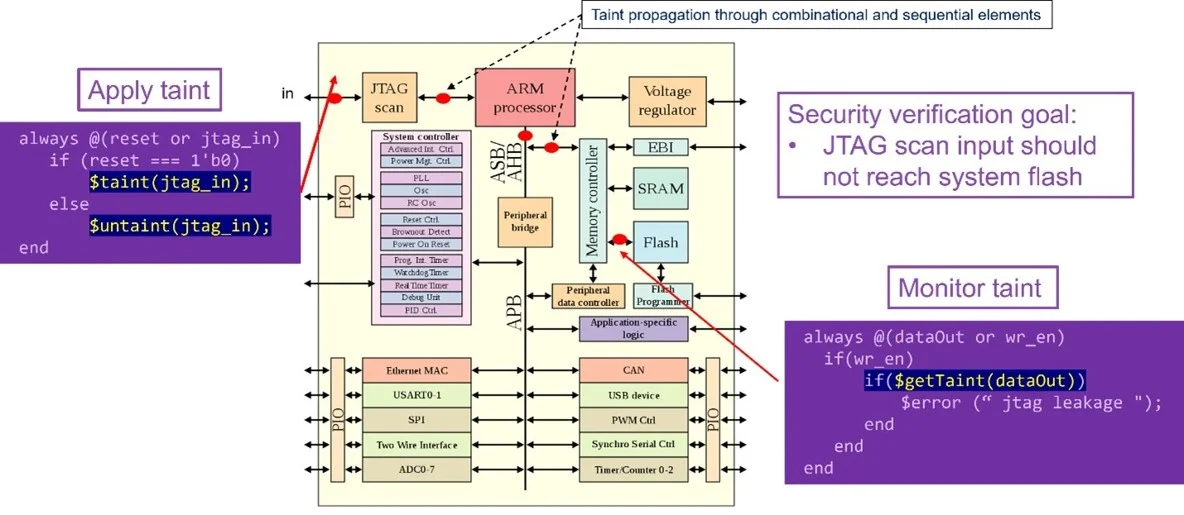

Figure 2: Example of taint propagation from JTAG input across an SoC

Figure 2 illustrates how information-flow security analysis operates at the RTL level. Instead of evaluating specific stimulus values, the verification environment tracks influence from defined sources across the reachable state space and checks that protected assets remain isolated.

In this example, a taint is applied to an untrusted JTAG input and propagated through combinational and sequential logic across the interconnect. The security objective is to prove that the tainted value cannot reach protected Flash memory. Monitoring logic flags a violation if such influence occurs.

Data leakage and key exposure: the hard cases are indirect

Verification teams recognise the need to protect cryptographic keys. However, leakage rarely appears as a direct wire from key storage to an output pin. It typically arises through indirect paths such as:

- complex interconnect, arbitration, and packetisation

- status and ready/valid signalling that encodes information

- debug visibility mechanisms introduced for bring-up

- mixed signals that combine secure and non-secure influence

A robust architectural policy states that key material may flow only through the authorised cryptographic block, and encrypted data may exit only via defined outputs.

This policy can be formalised using information-flow assertions. The objective is to ensure that secret data cannot reach any output except through approved logic. In large designs, this rule becomes impossible to validate solely by visual inspection, particularly when the number of potential endpoints is large.

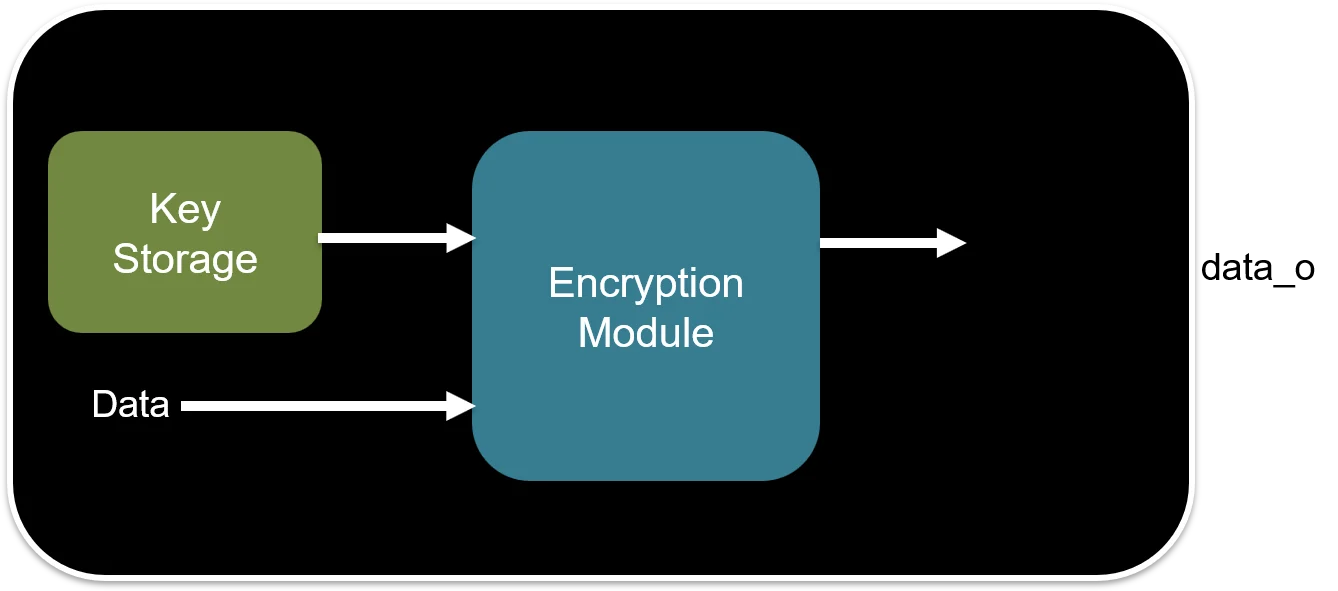

Figure 3: Restricting key propagation to authorised encryption paths

Figure 3 illustrates a focused example of policy enforcement. The verification objective constrains key material to influence outputs only via the authorised encryption module. If the property fails, the tool produces a bounded counterexample trace that identifies the control sequence and logic path responsible for the leakage.

This form of property captures both direct connectivity errors and subtle control-dependent propagation that might otherwise remain undetected.

Combine formal with RTL taint propagation

Formal methods provide exhaustive state-space exploration and generate minimal counterexamples when properties fail. However, security verification benefits from combining formal analysis with taint propagation during RTL simulation.

This combination provides two practical advantages.

- Propagation visibility:

Simulation-based taint tracking shows how far and how long a taint propagates through combinational logic, sequential elements, memories, and interconnect. Engineers can observe influence paths across time, not just single-cycle effects. - Faster debug iteration:

When investigating a suspected leak, taint tracing provides immediate feedback on where influence propagates. This accelerates refinement of formal constraints and security policies.

A combined flow typically follows these steps:

- Inject a taint at the defined untrusted source, or mark secret data as tainted.

- Run RTL simulation with taint tracking enabled to observe propagation through combinational and sequential logic.

- Add monitoring assertions that flag a violation when tainted data reaches a protected destination.

- Use formal analysis to prove that the destination is unreachable from the source under defined constraints and mode assumptions, or generate a counterexample trace if it is reachable.

This approach addresses a common security failure mode: rare, sequencing-dependent leakage that evades directed testing. Simulation alone cannot provide exhaustiveness. Formal analysis alone may struggle if constraints are incomplete or environmental assumptions are poorly defined. Used together, the two methods converge more quickly on actionable root cause evidence.

Constraints, trade-offs, and risk management

Security proofs are only as strong as the modelling decisions behind them. Verification teams should treat the following areas as explicit engineering tasks, not implicit assumptions.

1) Mode and lifecycle modelling

Most SoCs operate across multiple lifecycle states, including bring-up, manufacturing, RMA, and field deployment. A debug port that is legitimate in one state may be prohibited in another. The verification plan must explicitly model these modes and prove the correct security property for each lifecycle state. Failure to separate lifecycle assumptions leads to false confidence.

2) Assumptions and environmental constraints

Formal analysis requires environmental constraints to avoid unrealistic counterexamples. For example, allowing reset to toggle arbitrarily every cycle may create behaviours that never occur in silicon. However, overly restrictive assumptions can hide genuine vulnerabilities. Each assumption must be documented, reviewed, and justified as part of the security argument.

3) Coverage and completeness for security objectives

Security verification requires an objective-driven definition of completeness. Completeness must be defined with respect to a specific security policy, identified assets, defined sources, and operational modes. This is closer to requirements coverage than to pass-or-fail test execution. Without an explicit completeness definition, sign-off remains ambiguous.

4) Cryptography strength versus leakage control

Information-flow security verification does not assess cryptographic strength. It verifies that key material and intermediate values do not propagate through unintended channels. Teams should treat cryptographic correctness and integration-level non-leakage as separate sign-off criteria, each with its own evidence and validation approach.

Closing the loop: security sign-off and completeness

Security verification programmes fail when they cannot explain what “done” means. A more credible approach is:

- Define a security policy model (assets, sources, allowed conduits, forbidden paths).

- Map policy to verification objectives (non-interference checks, key-leakage rules, debug isolation rules).

- Demonstrate closure with:

- proven or bounded properties,

- justified unreachable cases,

- no vacuous properties,

- documented assumptions and waivers,

- and alignment between formal findings and simulation coverage gaps.

This creates decision confidence for programme owners: the team can explain which risks were reduced, which are accepted, and why.

Continue Exploring

If you would like to explore more work in this area, see the related articles in the Verification section on the Alpinum website:

👉 https://alpinumconsulting.com/resources/blogs/verification/

Further Resources:

👉 https://alpinumconsulting.com/services/training/

👉 https://alpinumconsulting.com/services/

References

[1] Hu, W. et al., “Hardware Information Flow Tracking”, ACM Computing Surveys (2021). Available: https://dl.acm.org/doi/10.1145/3447867

[2] NIST, Hardware Security Project (overview and research focus). Available: https://csrc.nist.gov/projects/hardware-security

[3] Arm Developer, “TrustZone technology” (architectural security states and scope). Available: https://developer.arm.com/documentation/100690/latest/

[4] NIST CSRC event material (pre-silicon security verification and information-flow concepts). Available: https://csrc.nist.gov/topics/activities-and-products/reference-materials

[5] Sun, H. et al., “Hardware information flow tracking based on lightweight …” (2024). Available: https://www.sciencedirect.com/science/article/pii/S0167404824003778

Written by : Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to over 50+ clients.

Mike started Alpinum in April 2025 to deliver a range of start-of-the art industry solutions:

Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%!) Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica. Alpinum Consulting also provides strategic board level consultancy services, helping companies to grow. Alpinum training department provides self-paced, fully online training in System Verilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM and CPU/RISC-V. Alpinum Events organises a number of free-to-attend industry events

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).

Stay Informed and Stay Ahead

Latest Articles, Guides and News

Explore related insights from Alpinum that dive deeper into design verification challenges, practical solutions, and expert perspectives from across the global engineering landscape.