Introduction

As semiconductor systems increase in size, configurability, and software interaction, verification effort increasingly determines overall programme risk. Simulation remains the dominant verification technique, yet it cannot exhaustively explore design behaviour. Formal verification offers mathematical exhaustiveness, but its application introduces strategic issues that extend far beyond tool selection.

The strategic issues in adopting formal verification sit at the intersection of workforce capability, methodology design, workflow integration, and programme economics. Organisations that treat formal verification as a direct replacement for simulation often encounter stalled proofs, misleading confidence, or unsustainable verification costs. Effective adoption requires deliberate system-level decisions about where, when, and how formal techniques are applied.

Five Key learning points

| Key learning point | Link to detailed explanation | Reference |

| Formal verification adoption is a strategic shift, not a tooling upgrade | Strategic shift from simulation to formal verification | Hany et al. [1] |

| Skills and culture constrain the formal verification scale | Strategic workforce and training constraints | Devarajegowda et al. [2] |

| State-space explosion limits proof scalability | Methodological and technical constraints | Kumar and Simon [3] |

| Formal verification must integrate with simulation | Workflow integration challenges | Hany et al. [1] |

| Economic value depends on selective deployment | Economic and management considerations | ISO 26262 case studies [4] |

Strategic shift from simulation to formal verification

Simulation and formal verification operate on fundamentally different principles. Simulation samples behaviour through stimulus and observation, while formal verification proves properties across all reachable states under defined constraints. This difference changes how progress, confidence, and completeness are measured.

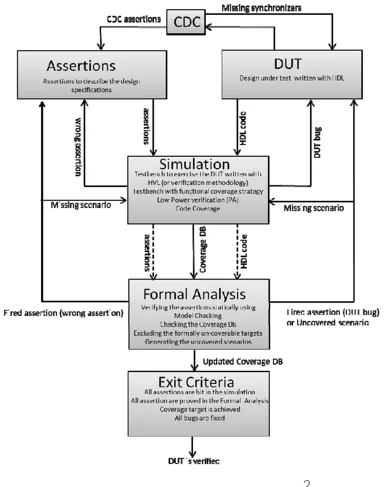

Figure 1: Unified functional verification flow

Figure 1 illustrates a unified functional verification flow in which simulation, assertion-based verification, coverage analysis, and formal verification operate as a coordinated system rather than isolated activities. Assertions are first exercised in simulation to validate correctness, then formally proven using model checking. Formal counterexamples are reused as directed simulation tests, while uncovered coverage points guide targeted formal analysis.

This unified approach addresses the complementary weaknesses of each technique. Simulation alone cannot exhaustively cover all scenarios, while formal verification alone suffers from state-space explosion as design complexity grows [1]. Programmes that fail to adjust verification metrics accordingly often misinterpret formal verification as slow or ineffective, even when it is exposing real design risk.

Strategic workforce and training constraints

Specialised expertise requirement

Formal verification requires skills in temporal logic, abstraction, constraint modelling, and proof interpretation. These differ substantially from stimulus-driven simulation expertise. The limited availability of experienced formal engineers remains a primary barrier to large-scale adoption.

Cultural transition

Writing effective SystemVerilog Assertions requires precision and clear expression of design intent. Teams accustomed to exploratory bug hunting must adapt to proof-oriented thinking, where progress is measured through convergence and property completeness rather than test counts. Early non-converging proofs often trigger resistance when organisational expectations are not aligned.

Organisational impact

When training and mentoring overhead is underestimated, formal verification becomes confined to a small group of experts. This concentration prevents assertion reuse across projects and limits long-term return on investment.

Methodological and technical constraints

State-space explosion

Formal tools explore all reachable states, but the state space grows exponentially with design size. Large datapaths, deep pipelines, and highly configurable logic quickly exceed tractable limits. Industrial experience confirms that full-chip proofs remain impractical without aggressive decomposition [2][3].

Abstraction and partitioning

As design complexity increases, the scalability of formal verification depends on deliberate abstraction and partitioning. Applying formal techniques uniformly across an entire design leads to rapid state-space growth and non-converging proofs. Effective adoption, therefore, requires early decisions about where formal analysis adds value.

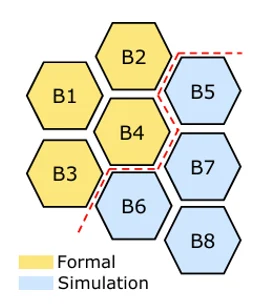

Figure 2 shows a mutually-exclusive deployment model that divides the design into formal-friendly and simulation-friendly regions. Control-dominant logic, interfaces, arbiters, and protocol-handling blocks are well suited to formal verification, while datapath-heavy and arithmetic-intensive logic remains in simulation. This separation constrains state-space growth while preserving system-level coverage intent.

Partitioning is a strategic decision rather than a tooling choice. It relies on architectural understanding, awareness of sequential depth, and clarity of verification objectives. By defining the formal–simulation split early, programmes can scale formal verification without allowing complexity to dominate verification effort.

Figure 2: Formal-friendly versus simulation-friendly design partitioning

Table 1 summarises common block-level characteristics that influence whether formal verification or simulation is the more effective primary technique. Typical classification of formal-friendly and simulation-friendly design blocks used to guide selective formal deployment.

| Formal-friendly blocks | Not ideal for formal verification |

| Control logic, arbiters, bus interfaces | Floating-point units, DSP pipelines |

| Shallow sequential logic | Profound sequential logic |

| Data transport blocks | Arithmetic-intensive datapaths |

Table 1: Typical classification of design blocks by suitability for formal verification

Specification quality risk

Formal verification proves consistency between implementation and specification. Incomplete or incorrect specifications produce false confidence. This risk is particularly acute when assertions are written late or without design ownership.

Constraint balance

Over-constraining hides legal behaviour and masks bugs. Under-constraining leads to non-convergence or spurious counterexamples. Achieving the right balance requires iterative refinement and an understanding of architecture.

Workflow integration from simulation to formal

Integration with development models

Most organisations operate within Agile, CI/CD, or V-model processes optimised for simulation regressions. Formal verification requires earlier RTL stability and explicit environment modelling, which can conflict with late-binding testbench practices.

Formal-ready environments

Formal verification delivers maximum value when assertion development begins alongside RTL development. Effective early adoption requires centralised assertion management, version control discipline, and early architectural alignment.

Complementary deployment

Formal verification rarely replaces simulation. Instead, it targets high-risk logic such as control paths, protocol compliance, clocking behaviour, and safety properties. Simulation continues to validate system-level behaviour, software interaction, and performance scenarios [1].

Economic and management considerations

Upfront investment

Formal verification requires investment in tools, computing resources, and training. These costs are visible early, particularly when proofs require iterative refinement.

Return on investment dynamics

Economic benefits include reduced late-stage defects, fewer silicon respins, and faster debug cycles. Automotive and safety-critical programmes report substantial reductions in post-silicon defects when formal verification is selectively applied to control and safety logic [4].

Ecosystem maturity

The formal verification ecosystem continues to evolve. Heterogeneous architectures, configurable designs, and software-defined hardware introduce modelling challenges. Programme owners must account for tool interoperability, long-term support, and internal portability of verification assets.

Strategic approaches for effective adoption

Strategic adoption requires treating formal verification as a complementary analytical capability rather than a universal replacement for simulation. Programmes that target formal methods at high-risk control logic, protocol handling, and safety-critical behaviour achieve earlier defect discovery without unsustainable proof effort. This selective approach aligns technical feasibility with organisational capacity and long-term return on investment.

- Start with pilot projects focused on high-risk control logic and protocol behaviour

- Introduce formal verification early, once RTL structure stabilises

- Limit scope to problem domains well-suited to exhaustive reasoning

- Build reusable assertions, abstraction models, and coverage intent

When applied deliberately, these practices allow formal verification to reduce verification risk without overwhelming teams or schedules.

Conclusion

The strategic issues in adopting formal verification are shaped less by tool capability and more by how verification is positioned within the programme. Formal methods introduce proof-based reasoning that affects skills, workflows, and confidence metrics, and these changes require deliberate system-level decisions.

When teams selectively apply formal verification, integrate it with simulation, and invest in formal-ready practices early, it becomes a credible way to manage verification risk rather than a source of friction. In this context, formal verification serves as a strategic capability, applied where exhaustive reasoning adds the most value.

Continue Exploring

If you would like to explore more work in this area, see the related articles in the Verification section on the Alpinum website:

👉 https://alpinumconsulting.com/resources/blogs/verification/

For discussion, collaboration, or technical engagement, contact Alpinum Consulting here:

👉 https://alpinumconsulting.com/contact-us/

References

[1] A. Hany, A. Ismail, A. Kamal, M. Badran, Approach for a Unified Functional Verification Flow, Saudi International Electronics, Communications and Photonics Conference, 2013.https://ieeexplore.ieee.org/document/6550753 [2] K. Devarajegowda, J. Vliegen, G. Petrovity, K. Fotouhi, A Mutually-Exclusive Deployment of Formal and Simulation Techniques Using Proof-Core Analysis, DVCon Europe, 2017.

https://www.researchgate.net/publication/321964894_A_Mutually-Exclusive_Deployment_of_Formal_and_Simulation_Techniques_Using_Proof-Core_Analysis [3] A. Kumar, S. Simon, A Semi-Formal Verification Methodology for Efficient Configuration Coverage of Highly Configurable Digital Designs, DVCon USA, 2021.

https://arxiv.org/abs/2405.01572 [4] ISO 26262 Functional Safety Standard and published automotive formal-verification case studies.

https://www.iso.org/standard/68383.html

Written by : Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to over 50+ clients.

Mike started Alpinum in April 2025 to deliver a range of start-of-the art industry solutions:

Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%!) Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica. Alpinum Consulting also provides strategic board level consultancy services, helping companies to grow. Alpinum training department provides self-paced, fully online training in System Verilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM and CPU/RISC-V. Alpinum Events organises a number of free-to-attend industry events

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinumconsulting).

Stay Informed and Stay Ahead

Latest Articles, Guides and News

Explore related insights from Alpinum that dive deeper into design verification challenges, practical solutions, and expert perspectives from across the global engineering landscape.