Introduction

RISC-V verification challenges rarely come from the ISA alone. Most lost time stems from interactions among specification choices, reference models, stimulus quality, debug visibility, and system integration. The teams that slip usually do not fail because they lack tests. They slip because they discover too late that their tests are answering the wrong question.

This matters more in RISC-V than in many fixed-ISA programmes because teams can choose profiles, privilege modes, debug features, optional extensions, and custom instructions with much greater freedom. That flexibility is one of RISC-V’s strengths, but it also means verification scope can drift unless the project defines exactly what the core must implement, how it will be observed, and which behaviours count as sign-off evidence. RISC-V International’s ratified specifications and profiles clarify the baseline, but they do not eliminate the need for disciplined interpretation in a real project.

Alpinum already has a strong RISC-V content base around formal verification, functional coverage, test generation, and lockstep co-simulation. The purpose of this article is different. It addresses the programme-level failure points that cause avoidable schedule loss across those technical areas. The existing sitemap confirms that those supporting pages are already available for internal cluster building.

Key Learning Points

| Key learning point | Link to detailed explanation | External reference link |
|---|---|---|
| Projects lose time early when the ISA scope, profiles, and custom extensions are not frozen tightly enough | ISA scope drift starts earlier than most teams expect | [1], [2] |
| Architectural tests help, but they do not replace a real verification plan or a trusted reference strategy | Certification is not verification, and reference model gaps cost time | [3], [4], [5] |
| Random instruction generation without a clear coverage model creates activity, not confidence | Test generation without coverage intent creates motion, not closure | [4], [6], [7] |
| Lockstep, debug, and trace infrastructure needs to be in place before failures become hard to interpret | Lockstep, trace, and debug are often added too late | [8], [9] |
| System integration and software-facing behaviour expose issues that unit-level CPU verification does not see | SoC integration and software-facing behaviour arrive late | [2], [8], [9] |

RISC-V verification challenges: the five places projects lose weeks

1. ISA scope drift starts earlier than most teams expect

The first delay often appears before regression begins. Teams say they are verifying “a RISC-V core”, but they have not frozen the exact instruction subset, privilege behaviour, debug expectations, CSR set, profiles, or the treatment of custom extensions. That sounds manageable until the reference model, compiler assumptions, architectural tests, and UVM environment all make slightly different assumptions. Then every failure becomes ambiguous.

RISC-V’s ratified specifications and profiles are designed to reduce this ambiguity. They give projects a stable definition of standardised behaviour and a clearer way to communicate what a core claims to support. But the presence of a published spec does not by itself produce a project-ready verification target. Teams still need a written implementation contract that spells out which extensions are present, which version matters, which CSRs are implemented, what happens on illegal instructions, and how custom instructions interact with decode, privilege, trap handling, and software expectations.

The prevention is straightforward. Freeze the verification envelope early. Treat the ISA manual, profiles, and implementation-specific behaviour as separate inputs to the plan. If the design includes custom extensions, document them in the same discipline as standard instructions. If the project does not do this, engineers lose weeks later debating whether a failing test exposes a bug, a spec gap, or an unstated assumption.
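As a minimal sketch of what a frozen verification envelope could look like in practice, the Python below records the implementation contract once and checks that every consumer of it (reference model, generator, compiler flags) assumes the same thing. The class name `VerificationEnvelope`, the function `envelope_mismatches`, the consumer names, and the custom extension `xmac` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class VerificationEnvelope:
    """Hypothetical record of what the core is claimed to implement."""
    base: str                     # base ISA, e.g. "RV32I"
    extensions: frozenset         # standard extensions in scope
    custom_extensions: frozenset  # project-specific extensions
    privilege_modes: frozenset    # privilege modes the core implements
    csrs: frozenset               # CSRs the core implements


def envelope_mismatches(contract, consumers):
    """Return {consumer: [field names that differ from the contract]}."""
    report = {}
    for name, assumed in consumers.items():
        diffs = [f.name for f in fields(contract)
                 if getattr(assumed, f.name) != getattr(contract, f.name)]
        if diffs:
            report[name] = diffs
    return report


# The single written contract (illustrative values only).
contract = VerificationEnvelope(
    base="RV32I",
    extensions=frozenset({"M", "C", "Zicsr"}),
    custom_extensions=frozenset({"xmac"}),  # hypothetical custom extension
    privilege_modes=frozenset({"M", "U"}),
    csrs=frozenset({"mstatus", "mtvec", "mepc", "mcause"}),
)

# What each part of the flow currently assumes (hypothetical consumers).
consumers = {
    "reference_model": contract,
    "random_generator": replace(contract, extensions=frozenset({"M", "Zicsr"})),
    "compiler_flags": replace(contract, custom_extensions=frozenset()),
}

mismatches = envelope_mismatches(contract, consumers)
for consumer, names in mismatches.items():
    print(f"{consumer}: disagrees with the contract on {', '.join(names)}")
```

Running a check of this kind before regressions start turns "slightly different assumptions" into an explicit list of disagreements that can be resolved once, rather than rediscovered in every ambiguous failure.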

2. Certification is not verification, and reference model gaps cost time

The second place projects lose weeks is at the reference layer. Many teams assume that once they have architectural tests or compliance-style results, they have covered the core. They have not. The RISC-V Architectural Certification Tests explicitly state that they are certification tests and that additional verification should be run on all processors. That warning matters because architectural tests prove conformance on defined behaviours. They do not replace microarchitectural stress, pipeline hazard exploration, interrupt timing stress, performance corner cases, or SoC-level interaction.

This is where reference model strategy becomes critical. A good RISC-V verification flow separates at least three things: the architectural baseline, the executable or formal model used for comparison, and the dynamic environment used to generate and check stimulus. The Sail RISC-V model provides an official formal specification of the architecture, while frameworks such as RISCOF and riscv-arch-test help run architectural tests consistently. That is useful, but teams still need to decide where lockstep comparison fits, how custom instructions are modelled, and which behaviours belong in directed tests, constrained-random regressions, or formal properties.

Projects lose time when they discover late that the model used by one part of the team is not the model assumed by another. The fix is to establish a single reference strategy at the start: which model is authoritative for ISA intent, which infrastructure runs architectural tests, and which checker path is used in dynamic regressions. Without that, failures become arguments about tooling rather than decisions about correctness.
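One way to make a single reference strategy checkable is to have each team declare which tool it uses for each role and flag any role with zero or several answers. The sketch below assumes three roles taken from the paragraph above; the role names, team names, and the tool label `in-house-iss` are hypothetical, while `sail-riscv` and `riscof` simply stand in for the projects already mentioned.

```python
from collections import defaultdict

# Roles that should each have exactly one agreed tool (names are illustrative).
REQUIRED_ROLES = ("isa_authority", "arch_test_runner", "dynamic_checker")


def strategy_conflicts(declarations):
    """Return {role: sorted tools} for every role without exactly one tool.

    declarations is an iterable of (team, role, tool) tuples.
    """
    tools_by_role = defaultdict(set)
    for _team, role, tool in declarations:
        tools_by_role[role].add(tool)

    problems = {}
    for role in REQUIRED_ROLES:
        tools = tools_by_role.get(role, set())
        if len(tools) != 1:
            problems[role] = sorted(tools)
    return problems


# Hypothetical declarations collected from two teams.
declarations = [
    ("rtl", "isa_authority", "sail-riscv"),
    ("dv", "isa_authority", "in-house-iss"),
    ("dv", "arch_test_runner", "riscof"),
]

conflicts = strategy_conflicts(declarations)
for role, tools in conflicts.items():
    status = "no tool declared" if not tools else "conflicting tools: " + ", ".join(tools)
    print(f"{role}: {status}")
```

In this example the two teams disagree on the ISA authority and nobody has named a dynamic checker, which is exactly the kind of gap that otherwise surfaces as an argument about tooling in the middle of a debug session.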

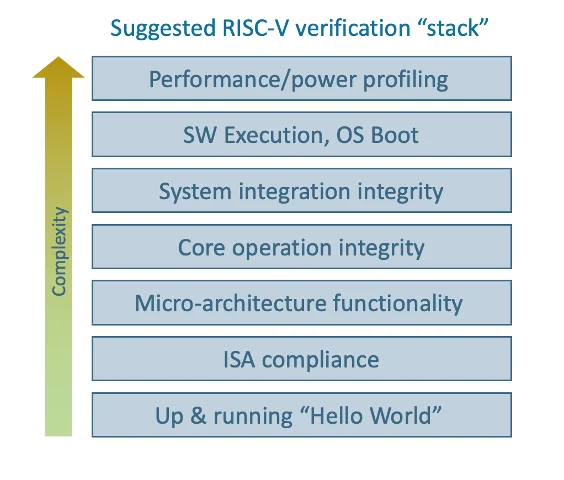

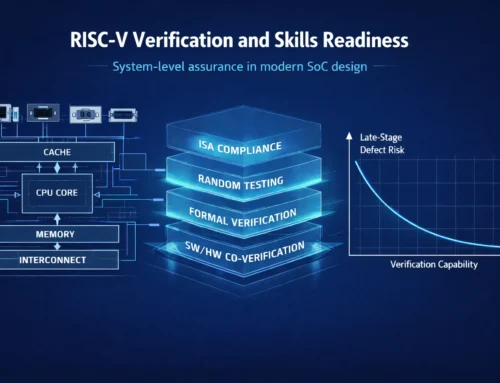

Figure 1: RISC-V verification stack from specification to sign-off. Source: SemiWiki

Figure 1 illustrates how RISC-V verification expands from basic functional correctness to full system validation. Early stages focus on ISA compliance and micro-architectural behaviour. As the design matures, verification must extend into core operation, system integration, and software execution. The final stages validate performance and system-level behaviour under real workloads. Projects lose time when these layers are treated as a single activity rather than a structured progression. Each layer introduces new assumptions, interfaces, and failure modes that must be verified independently before moving upward.

3. Test generation without coverage intent creates motion, not closure

The third loss point is stimulus. RISC-V teams often adopt random instruction generation early, which is sensible. The riscv-dv project provides the community with a SystemVerilog/UVM-based random instruction generator for processor verification, and it has become a practical building block across many environments. But random instruction generation is only effective when tied to a real coverage strategy. Otherwise, the project accumulates activity, waveform volume, and failure logs without a proportional increase in confidence.

This is especially visible in cores with privilege transitions, debug entry, exceptions, interrupts, CSRs, PMP-like protection features, or custom instructions. Random tests can hit instruction combinations, but they do not automatically prove that the project has exercised meaningful state transitions or software-facing corner cases. Teams often discover too late that they have plenty of instruction traffic but weak evidence for trap behaviour, retirement correctness, or corner-case sequencing.

The better approach is to connect instruction generation directly to a coverage model that reflects the core’s real risk areas. The prevention is simple but often skipped. Define the coverage question before scaling regressions. Decide which instruction classes, privilege flows, debug entries, CSR interactions, and exception paths must be observed. Then make random generation serve those outcomes rather than replacing them.
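To illustrate defining the coverage question before scaling regressions, the sketch below declares the required cross of privilege mode and trap-related event up front, then reports which bins a trace has not yet hit. The trace record format (`priv`, `event`) and the event names are assumptions made for this example, not the output format of any particular generator or simulator.

```python
from itertools import product

# Coverage intent, written down before any regression is scaled up.
PRIV_MODES = ("M", "U")
EVENTS = ("illegal_instruction", "ecall", "timer_interrupt")


def coverage_holes(trace, required=None):
    """Return the sorted list of (priv, event) bins the trace never hit."""
    if required is None:
        required = product(PRIV_MODES, EVENTS)
    required = set(required)
    hit = {(rec["priv"], rec["event"]) for rec in trace if rec.get("event")}
    return sorted(required - hit)


# Hypothetical retired-instruction records from a random regression.
trace = [
    {"priv": "M", "event": "ecall"},
    {"priv": "U", "event": "ecall"},
    {"priv": "M", "event": None},  # ordinary instruction, no event of interest
    {"priv": "U", "event": "illegal_instruction"},
    {"priv": "M", "event": "timer_interrupt"},
]

holes = coverage_holes(trace)
for priv, event in holes:
    print(f"not yet observed: {event} in {priv}-mode")
```

The list of holes is what should steer generator constraints or directed tests. A regression that produces more instructions without shrinking that list is adding activity rather than confidence.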

4. Lockstep, trace, and debug are often added too late

The fourth delay occurs when the project finally encounters real failures but lacks the observability needed to interpret them quickly. RISC-V offers standardised debug support, and the Debug Specification exists precisely because hardware debug needs a common architecture across diverse implementations. Yet many teams still bring up lockstep comparison, trace hooks, or debug-mode validation too late. They focus first on instruction execution and only later ask whether they can efficiently localise a divergence.

That is costly. As regressions grow and SoC interactions increase, a missing retirement comparator, poor trace visibility, or incomplete debug-state checking can turn a one-day bug into a one-week investigation. This is why lockstep co-simulation matters operationally, not just academically. A good RISC-V lockstep co-simulation flow steps the DUT and the reference model together and compares them at each instruction retirement, which narrows the point of divergence and makes debugging scalable.

The prevention is to build observability into the verification architecture, not as a later enhancement. Decide early how the project will compare retired instructions, how debug mode will be entered and observed, how CSRs and memory accesses will be checked, and what trace data is required for root-cause analysis. If that work waits until failures become expensive, the schedule loss is predictable.
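The comparison of retired instructions described above can be sketched as a step-and-compare loop that stops at the first divergence. The `Retirement` record and its fields are an assumed, simplified view of architectural state at retirement; a real flow would also compare CSR and memory side effects.

```python
from dataclasses import dataclass
from itertools import zip_longest
from typing import Optional


@dataclass(frozen=True)
class Retirement:
    """Simplified, hypothetical record of one retired instruction."""
    pc: int
    insn: int
    rd: Optional[int]        # destination register index, if any
    rd_value: Optional[int]  # value written to rd, if any


def first_divergence(dut_stream, ref_stream):
    """Return (index, dut_record, ref_record) at the first mismatch, else None.

    A stream that ends early shows up as a mismatch against None.
    """
    for index, (dut, ref) in enumerate(zip_longest(dut_stream, ref_stream)):
        if dut != ref:
            return index, dut, ref
    return None


# Reference model: addi x1, x0, 5 followed by add x2, x1, x1.
ref = [
    Retirement(pc=0x80000000, insn=0x00500093, rd=1, rd_value=5),
    Retirement(pc=0x80000004, insn=0x00108133, rd=2, rd_value=10),
]
# DUT with an injected error in the second result.
dut = [
    Retirement(pc=0x80000000, insn=0x00500093, rd=1, rd_value=5),
    Retirement(pc=0x80000004, insn=0x00108133, rd=2, rd_value=11),
]

mismatch = first_divergence(dut, ref)
if mismatch is not None:
    index, dut_rec, ref_rec = mismatch
    print(f"divergence at retirement {index}, pc={dut_rec.pc:#x}: "
          f"dut rd_value={dut_rec.rd_value}, ref rd_value={ref_rec.rd_value}")
```

Reporting the first mismatching retirement, rather than a failing end-of-test signature, is what keeps the investigation local to a handful of instructions.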

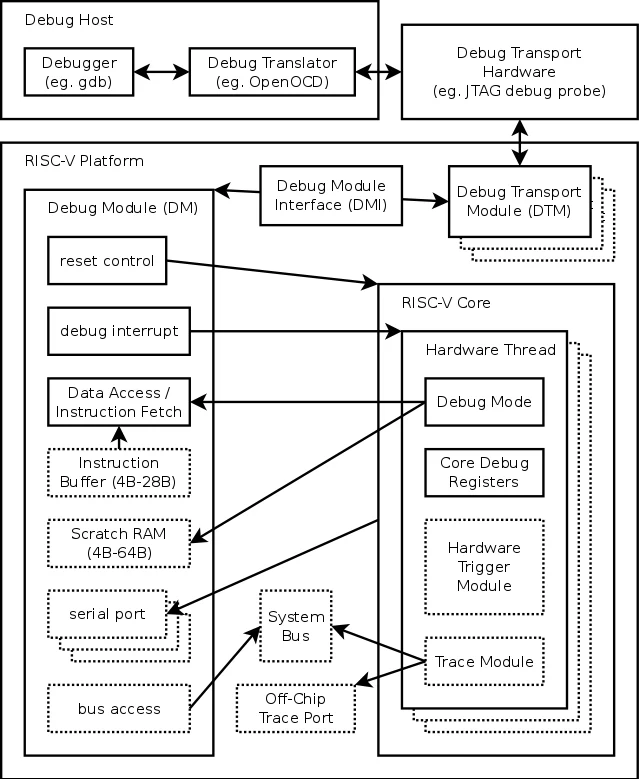

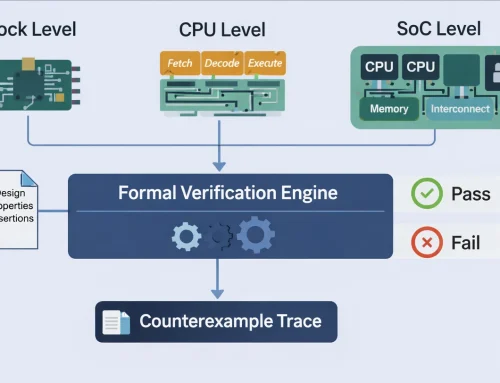

Figure 2: Lockstep and debug observability in a RISC-V verification flow. Source: sifive.github

Figure 2 illustrates how lockstep comparison and debug infrastructure work together to make failures observable at the point of divergence. The DUT, reference model, and checker path must remain aligned on what constitutes architectural state and when comparison is valid, typically at instruction retirement. The diagram highlights the flow of execution, monitoring, and debug signals required to correlate DUT behaviour with the reference model in real time. When this alignment is missing, teams lose time determining whether a mismatch reflects a genuine design defect, a modelling inconsistency, or an observability gap. Effective lockstep verification, therefore, depends not only on comparison logic but also on a well-defined debug and trace strategy that provides sufficient visibility into internal state, control flow, and exception-handling paths.

5. SoC integration and software-facing behaviour arrive late

The fifth place projects lose weeks is at the core boundary, when they treat CPU verification as if it ends there. It does not. RISC-V cores live inside systems that include interconnect, memory maps, interrupt controllers, boot paths, firmware assumptions, and software toolchains. OpenHW’s verification strategy for CORE-V makes this point clearly by framing industrial-grade pre-silicon verification as more than isolated core stimulus. It includes environment architecture, planning, requirements, simulation infrastructure, and execution context.

Late-stage issues often appear in interrupt sequencing, reset behaviour, privilege transitions, debug handoff, memory consistency assumptions, or interactions between software and custom instructions. Teams that only verify instruction semantics at the unit level can miss exactly the kinds of behaviours that software and integration expose first.

The prevention is to pull software-facing verification earlier. Run system-oriented scenarios before the project believes the CPU is “basically done”. Define what must be true at boot, trap entry, interrupt response, debug attach, and memory-system interaction. If those checks are deferred until integration, the project usually discovers that apparently isolated CPU correctness does not yet equate to product readiness.
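As one example of writing down "what must be true" at trap entry, the sketch below checks the machine-mode state a software handler relies on after a synchronous exception. It assumes the trap is taken in M-mode with no delegation and that `mtvec` is in direct mode; the state dictionaries and the function name `check_m_trap_entry` are hypothetical.

```python
# mstatus field positions in the RISC-V privileged architecture.
MIE_BIT, MPIE_BIT, MPP_SHIFT = 3, 7, 11
PRIV_ENCODING = {"U": 0, "S": 1, "M": 3}


def check_m_trap_entry(before, after, cause):
    """Return the names of fields that violate M-mode trap-entry expectations.

    Assumes a synchronous exception, no delegation, and mtvec in direct mode.
    """
    errors = []
    if after["mepc"] != before["pc"]:
        errors.append("mepc")            # must hold the pc of the trapping instruction
    if after["mcause"] != cause:
        errors.append("mcause")
    if after["pc"] != (before["mtvec"] & ~0x3):
        errors.append("pc")              # handler starts at the mtvec base address
    old_mie = (before["mstatus"] >> MIE_BIT) & 1
    if ((after["mstatus"] >> MPIE_BIT) & 1) != old_mie:
        errors.append("mstatus.MPIE")    # previous interrupt enable is saved
    if ((after["mstatus"] >> MIE_BIT) & 1) != 0:
        errors.append("mstatus.MIE")     # interrupts are disabled on entry
    if ((after["mstatus"] >> MPP_SHIFT) & 0x3) != PRIV_ENCODING[before["priv"]]:
        errors.append("mstatus.MPP")     # previous privilege mode is saved
    return errors


# An ecall from U-mode (cause 8) with interrupts enabled beforehand.
before = {"pc": 0x80000100, "priv": "U", "mstatus": 1 << MIE_BIT, "mtvec": 0x80000000}
good_after = {"pc": 0x80000000, "mepc": 0x80000100, "mcause": 8, "mstatus": 1 << MPIE_BIT}
bad_after = dict(good_after, mepc=0x80000104)  # injected error: mepc points past the ecall

print(check_m_trap_entry(before, good_after, cause=8))
print(check_m_trap_entry(before, bad_after, cause=8))
```

Similar small predicates can be written for boot, interrupt response, and debug attach, so that system-oriented scenarios have explicit pass criteria well before full integration.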

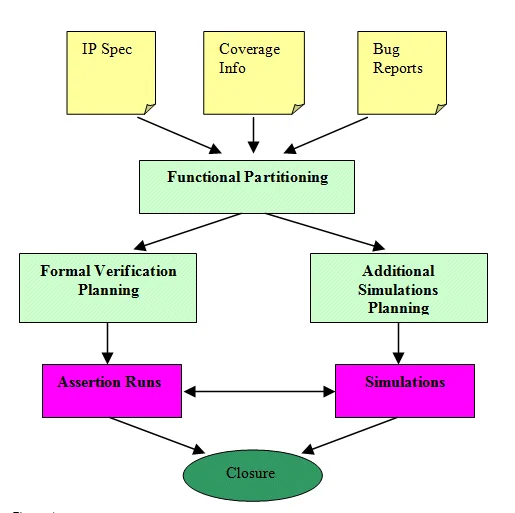

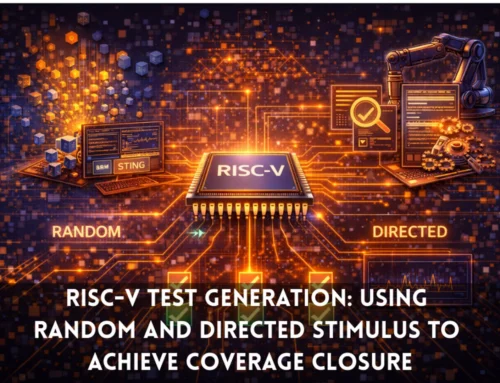

Figure 3: Coverage closure loop for RISC-V verification. Source: Design-Reuse

Figure 3 shows that coverage closure in RISC-V verification is achieved through an iterative combination of complementary methods rather than a single approach. Architectural tests establish baseline ISA correctness, constrained-random generation explores instruction combinations, and formal verification targets hard-to-reach conditions. Lockstep comparison helps isolate divergence, while software-driven scenarios expose system-level behaviour. Projects lose time when they rely on a single method rather than integrating multiple methods into a structured, coverage-driven loop.

How to prevent it: a disciplined RISC-V verification flow

A disciplined RISC-V verification flow does five things early. It freezes scope, defines the reference strategy, ties generation to coverage, builds observability into the environment, and brings software-facing scenarios to the fore. None of those steps is exotic. The problem is usually not technical feasibility. It is project timing.

Applying these lessons in RISC-V programmes

Teams evaluating or tightening a RISC-V verification flow often require more than isolated techniques. A robust approach connects ISA interpretation, coverage intent, debug observability, and SoC-level execution into a coherent path toward sign-off. This alignment ensures that verification evidence remains consistent from early architectural validation through to system-level behaviour.

For a deeper technical view of how these elements are applied in practice, Alpinum’s RISC-V Verification Training provides structured coverage of verification planning, reference models, coverage strategies, and debug methodologies within real engineering workflows:

https://alpinumconsulting.com/services/training/riscv-verification-training/

Further detail on broader verification architecture, including methodology selection, environment design, and integration at the system level, is available within Alpinum’s Design Verification services:

https://alpinumconsulting.com/services/designverification/

These resources provide a practical foundation for teams aiming to implement a disciplined, coverage-driven RISC-V verification strategy aligned with programme-level delivery requirements.

References

[1] RISC-V International, “Ratified Specifications,” 2026. Available: RISC-V International specifications library.

[2] RISC-V International, “RISC-V Profiles,” 2026. Available: official RISC-V Profiles documentation.

[3] RISC-V, “riscv-arch-test: RISC-V Architectural Certification Tests,” GitHub, 2026.

[4] CHIPS Alliance, “riscv-dv: Random instruction generator for RISC-V processor verification,” GitHub, 2026.

[5] riscv-software-src, “RISCOF: RISC-V Architectural Test Framework,” GitHub, 2026.

[6] RISC-V International, “The RISC-V Instruction Set Manual, Volume I,” 2026.

[7] YosysHQ, “riscv-formal: RISC-V Formal Verification Framework,” GitHub, 2026.

[8] RISC-V International, “The RISC-V Debug Specification,” 2025.

[9] OpenHW Group, “CORE-V Verification Strategy,” 2026.

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to over 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%!).

Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

Alpinum Consulting also provides strategic board-level consultancy services, helping companies to grow.

Alpinum’s training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
