Introduction

Artificial intelligence has largely evolved in digital environments, where models process structured inputs and generate outputs without directly interacting with the physical world. This paradigm is shifting. With its Physical AI architecture, NVIDIA targets systems that must perceive, reason, and act within real-world constraints such as latency, uncertainty, and physics.

Unlike conventional AI systems, physical AI must integrate sensing, decision-making, and actuation within bounded compute and energy envelopes. This introduces system-level challenges across training, validation, and deployment. The transition is not incremental. It requires rethinking how AI systems are designed, verified, and operated at scale.

NVIDIA proposes a structured approach to this problem through a layered architecture that connects large-scale training, physics-based simulation, and embedded runtime execution.

Key Learning Points

| Key learning point | Link to detailed explanation | External reference |
| --- | --- | --- |
| Physical AI extends AI from digital to embodied systems | From Digital Models to Physical AI Systems | [1] |
| The three-computer architecture structures development and deployment | Physical AI architecture NVIDIA: Three-Computer Solution | [1] |
| Simulation-first workflows reduce deployment risk | Simulation-First Development and Digital Twins | [2] |
| World foundation models enable environment reasoning | World Foundation Models and the Role of Cosmos | [4] |
| System-level constraints define scalability and reliability | System-Level Constraints, Trade-offs, and Risks | [2],[3] |

From Digital Models to Physical AI Systems

Traditional AI operates in controlled environments with curated data and static evaluation. Physical AI systems do not. They must function in dynamic, partially observable environments where uncertainty, noise, and real-world variability dominate.

Systems such as robots and autonomous platforms rely on continuous sensor fusion and operate under non-deterministic conditions shaped by external factors and physical interactions. At the same time, they are constrained by latency, energy limits, and mechanical realities, with safety-critical implications.

This shift introduces closed-loop intelligence, where perception, planning, and action continuously interact. Performance depends not only on model accuracy, but on stable system behaviour under changing conditions.
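The closed loop described above can be illustrated with a minimal sketch. Everything here is a toy assumption rather than part of any NVIDIA stack: a one-dimensional position, a noisy sensor, and a proportional controller stand in for perception, planning, and actuation, and the function names are hypothetical.

```python
import random


def sense(true_position: float, noise_std: float, rng: random.Random) -> float:
    """Perception: return a noisy measurement of the true position."""
    return true_position + rng.gauss(0.0, noise_std)


def plan(measured_position: float, target: float, gain: float = 0.5) -> float:
    """Planning: proportional controller that commands a velocity towards the target."""
    return gain * (target - measured_position)


def act(true_position: float, velocity_cmd: float, dt: float) -> float:
    """Actuation: integrate the commanded velocity over one time step."""
    return true_position + velocity_cmd * dt


def run_closed_loop(target: float = 1.0, steps: int = 200, dt: float = 0.1,
                    noise_std: float = 0.01, seed: int = 0) -> float:
    """Run sense -> plan -> act repeatedly and return the final position."""
    rng = random.Random(seed)
    position = 0.0
    for _ in range(steps):
        measurement = sense(position, noise_std, rng)
        command = plan(measurement, target)
        position = act(position, command, dt)
    return position


final_position = run_closed_loop()
print(f"final position: {final_position:.3f}")
```

The point of the sketch is that the final position depends on the whole loop: sensor noise feeds into every command, so stability is a property of the loop and not of any single stage.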

As a result, physical AI is fundamentally a system integration problem. Reliability emerges from the coordination of sensing, decision-making, control, and hardware, not from isolated models. This marks a clear transition from digital AI to embodied, real-world systems.

Physical AI architecture NVIDIA: Three-Computer Solution

NVIDIA defines a structured approach known as the three-computer solution, which separates concerns throughout the AI system development lifecycle.

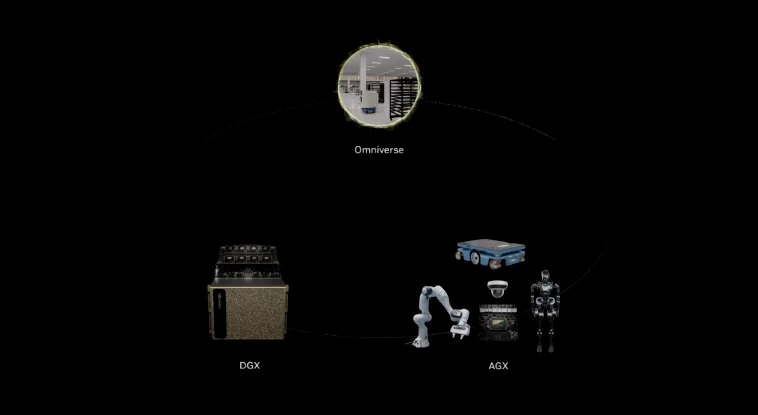

Figure 1: The separation between training, simulation, and deployment environments, enabling controlled iteration and validation before real-world execution. Source: sysgen.de

Figure 1 shows how NVIDIA structures Physical AI into three distinct compute domains. The training layer focuses on large-scale model development using DGX systems. The simulation layer uses Omniverse to validate behaviour within physics-based digital twins. The deployment layer executes optimised models on Jetson and DRIVE platforms under real-time and power constraints. This separation enables independent optimisation of each stage while maintaining system-level consistency across the lifecycle.

The architecture consists of three distinct compute layers:

1. Training Systems (NVIDIA DGX)

Large-scale compute platforms are used to train foundation models. These models learn:

- Object recognition

- Scene understanding

- Action planning

Training relies on large datasets and distributed computing. The goal is to produce generalisable representations rather than task-specific rules.

2. Simulation Systems (NVIDIA Omniverse)

Simulation provides a controlled environment for validation. Digital twins replicate real-world systems with physics fidelity.

This allows:

- Scenario testing at scale

- Edge-case exploration

- Performance validation before deployment

Simulation is not optional. It is required to achieve acceptable risk levels in physical systems.

3. Runtime Systems (Jetson and DRIVE)

Deployment occurs on constrained edge platforms. These systems must:

- Execute inference in real time

- Operate within power limits

- Maintain deterministic behaviour

This separation ensures that each stage is optimised for its constraints.

Simulation-First Development and Digital Twins

A defining characteristic of physical AI is the adoption of simulation-first workflows, where systems are designed, trained, and validated in virtual environments before any real-world deployment. This approach addresses the inherent risks of operating in physical domains by shifting early-stage experimentation and verification into controlled, repeatable settings.

This mirrors established simulation-driven validation practice in automotive systems, where virtual environments are used to reduce risk before real-world deployment.

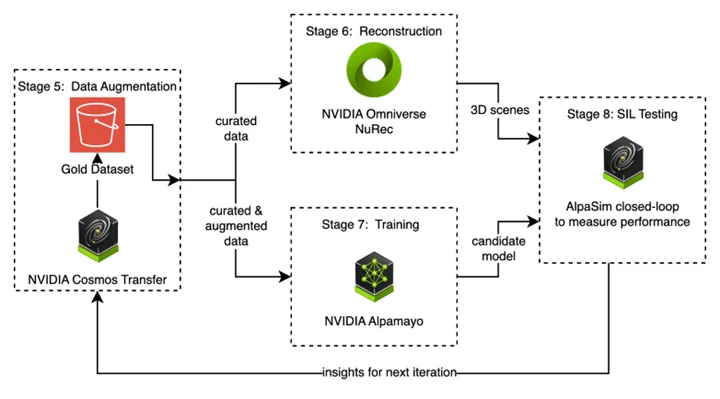

Figure 2: Simulation environments enable iterative validation before deployment, reducing risk and improving system robustness. Source: Aws.amazon

Figure 2 shows how simulation environments support iterative validation prior to deployment, enabling engineers to refine system behaviour under a wide range of conditions. Digital twins are constructed using real-world or synthetic data, creating virtual representations of physical systems that capture geometry, dynamics, and environmental interactions. Within platforms such as Omniverse, these environments are used to reconstruct scenarios, generate variations, and evaluate system responses under controlled conditions.

Simulation operates as a closed-loop process. Models are trained on synthetic data, validated across varied scenarios, and continuously refined based on performance feedback. This allows exposure to rare or hazardous conditions that are impractical to reproduce physically, while supporting regression testing and safety validation.
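Regression testing across scenarios can be sketched as a small harness. This is an illustrative example only: the scenario names, reaction time, and safety margin are assumed values, and the pass criterion uses the textbook stopping-distance relation (reaction distance plus v²/(2μg)) in place of a real simulator.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass(frozen=True)
class Scenario:
    name: str
    speed_mps: float
    friction: float
    obstacle_distance_m: float


def stopping_distance(speed_mps: float, friction: float,
                      reaction_time_s: float = 0.2) -> float:
    """Distance covered during the reaction time plus braking at friction * g."""
    return speed_mps * reaction_time_s + speed_mps ** 2 / (2 * friction * G)


def run_regression(scenarios, margin_m: float = 1.0):
    """Return the names of scenarios in which the vehicle cannot stop in time."""
    return [
        s.name for s in scenarios
        if stopping_distance(s.speed_mps, s.friction) + margin_m > s.obstacle_distance_m
    ]


# Hypothetical scenario set; a real suite would be generated from the digital twin.
SCENARIOS = [
    Scenario("dry_urban", speed_mps=10.0, friction=0.8, obstacle_distance_m=20.0),
    Scenario("wet_urban", speed_mps=10.0, friction=0.4, obstacle_distance_m=20.0),
    Scenario("icy_urban", speed_mps=10.0, friction=0.1, obstacle_distance_m=20.0),
]

failures = run_regression(SCENARIOS)
print("failing scenarios:", failures)
```

Re-running the same scenario set after every model or controller change turns rare conditions, such as the low-friction case, into repeatable regression checks.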

However, simulation introduces key trade-offs. High-fidelity environments improve realism but increase computational cost, limiting scalability. Lower-fidelity models risk missing critical dynamics. In addition, the simulation-to-reality gap remains a central challenge. Differences in sensors, environments, and physical interactions can lead to discrepancies in real-world performance.

Bridging this gap requires calibration, domain randomisation, and validation against real-world data. Simulation alone cannot guarantee coverage of all conditions, making hybrid validation strategies essential. Reliable deployment, therefore, depends on aligning simulation fidelity, data generation, and validation processes to ensure consistent behaviour beyond the virtual environment.
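Domain randomisation, mentioned above, can be sketched as sampling simulator parameters from ranges at the start of each episode. The parameter names and ranges below are assumptions chosen for illustration and do not correspond to any particular simulator's API.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float
    sensor_noise_std: float
    light_intensity: float
    actuation_delay_steps: int


# Hypothetical ranges; in practice these would be calibrated against real-world data.
FLOAT_RANGES = {
    "friction": (0.4, 1.0),
    "sensor_noise_std": (0.0, 0.05),
    "light_intensity": (0.3, 1.5),
}
DELAY_RANGE = (0, 3)  # inclusive bounds, in control steps


def sample_params(rng: random.Random) -> SimParams:
    """Draw one set of simulator parameters uniformly from the configured ranges."""
    return SimParams(
        friction=rng.uniform(*FLOAT_RANGES["friction"]),
        sensor_noise_std=rng.uniform(*FLOAT_RANGES["sensor_noise_std"]),
        light_intensity=rng.uniform(*FLOAT_RANGES["light_intensity"]),
        actuation_delay_steps=rng.randint(*DELAY_RANGE),
    )


def randomised_episodes(n: int, seed: int = 0):
    """Return n parameter sets; a fixed seed keeps the sweep reproducible."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]


episodes = randomised_episodes(100, seed=1)
print(episodes[0])
```

Seeding the generator is a deliberate choice here: it keeps randomised sweeps repeatable, so a failure found in one run can be reproduced in the next.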

World Foundation Models and the Role of Cosmos

NVIDIA introduces Cosmos, an open platform built around world foundation models (WFMs). These models differ from traditional AI models in that they attempt to model environments rather than isolated tasks.

WFMs support:

- Predictive environment generation

- Vision-language reasoning

- Action simulation grounded in physics

This enables systems to:

- Anticipate outcomes

- Generalise across environments

- Reduce reliance on labelled datasets

The approach aligns with a shift from supervised learning to world modelling.

As described in recent developments in the ecosystem, these models are being used to generate synthetic environments and validate robotic behaviour at scale.
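The idea of anticipating outcomes with a world model can be illustrated with a toy planner. This is not the Cosmos API: `world_model` is a hand-written, hypothetical stand-in for a learned model, and the one-dimensional state, action set, and horizon are assumptions made to keep the sketch small.

```python
from itertools import product

ACTIONS = (-1.0, 0.0, 1.0)  # candidate velocity commands


def world_model(state: float, action: float, dt: float = 0.5) -> float:
    """Stand-in for a learned model: predict the next position for an action."""
    return state + action * dt


def predicted_cost(state: float, actions, goal: float) -> float:
    """Roll the model forward and sum the predicted distance to the goal."""
    total = 0.0
    for action in actions:
        state = world_model(state, action)
        total += abs(goal - state)
    return total


def choose_action(state: float, goal: float, horizon: int = 3) -> float:
    """Evaluate every action sequence in imagination and return the first
    action of the sequence with the lowest predicted cost."""
    best_sequence = min(
        product(ACTIONS, repeat=horizon),
        key=lambda seq: predicted_cost(state, seq, goal),
    )
    return best_sequence[0]


print(choose_action(state=0.0, goal=2.0))
```

The planner never touches the real system while comparing options; it only queries the model, which is the sense in which a world model lets a system anticipate outcomes before acting.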

Robotics and Embodied Intelligence

Physical AI is realised in robotic systems, where perception, decision-making, and control operate as a closed loop within real-world constraints. Unlike static AI, these systems must continuously sense, plan, and act under strict timing constraints, safety requirements, and environmental variability.

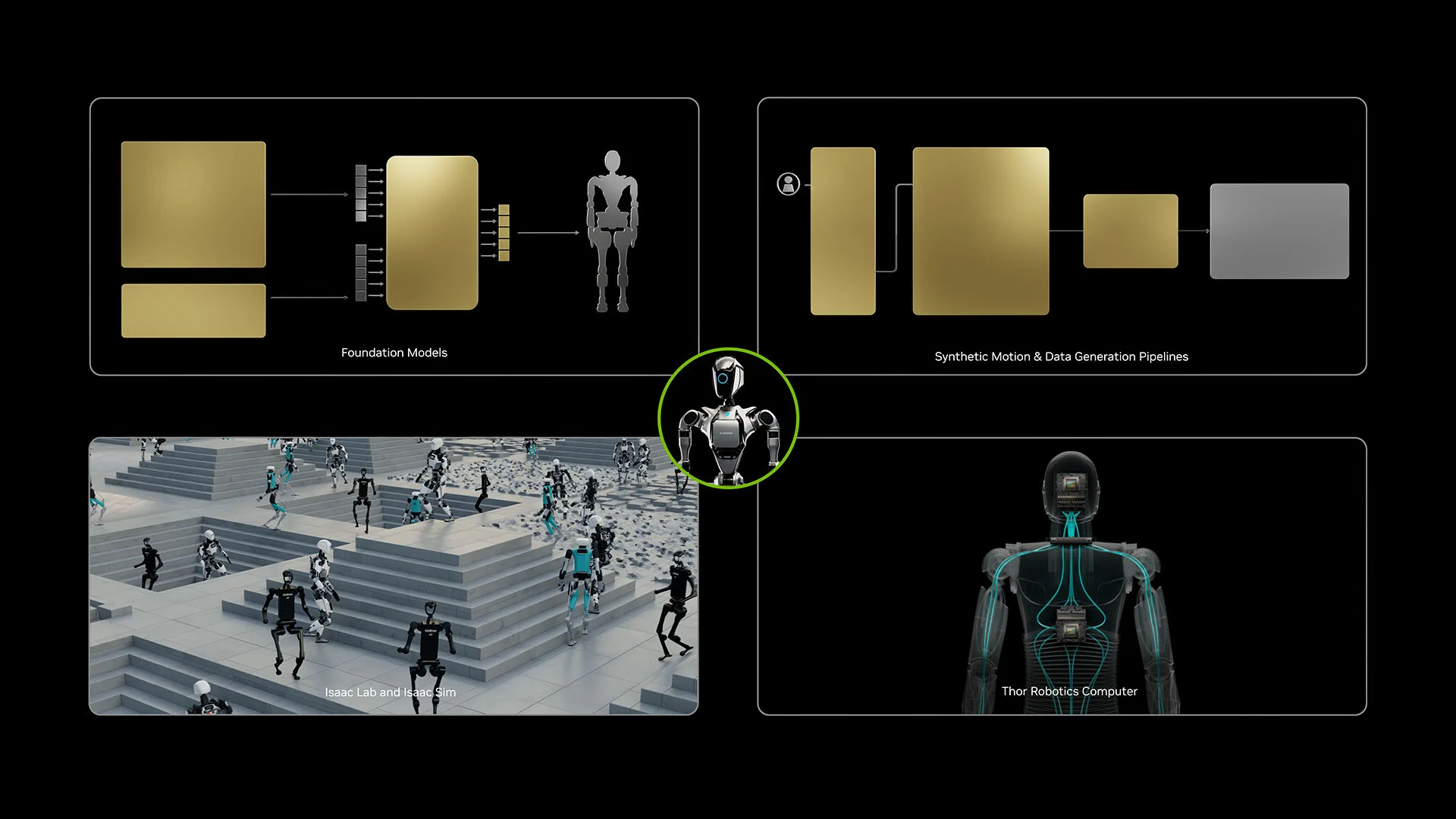

Figure 3: Robots are trained in simulation environments before transitioning to real-world deployment. Source: developer.nvidia

Figure 3 shows the simulation-to-real pipeline, where robots are trained in virtual environments before deployment. Synthetic scenarios enable large-scale development and validation of perception models, motion policies, and task execution strategies without the cost and limitations of physical testing.

Simulation-driven training, often using reinforcement learning, allows robots to acquire capabilities such as manipulation, navigation, and multi-step task execution while improving generalisation across varied conditions. Frameworks such as Isaac Lab accelerate this process through parallel simulation, enabling rapid iteration and scalable policy learning, while robot foundation models such as GR00T provide a pretrained starting point for new tasks.

However, transferring models from simulation to real systems introduces additional constraints. Sensor noise, actuation delays, and hardware limitations affect performance and must be addressed through calibration and domain randomisation.
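The effect of actuation delay can be shown with a small sketch. It assumes a one-dimensional system and a proportional controller with illustrative values for gain and time step; the same controller that converges without delay becomes unstable once commands reach the actuator two steps late.

```python
from collections import deque


def simulate_tracking_error(delay_steps: int, gain: float = 8.0, dt: float = 0.1,
                            steps: int = 100, target: float = 1.0):
    """Return the absolute tracking error per step when each command is
    applied delay_steps control cycles after it was computed."""
    position = 0.0
    # Commands waiting to reach the actuator; zero commands fill the initial delay.
    pending = deque([0.0] * delay_steps)
    errors = []
    for _ in range(steps):
        command = gain * (target - position)
        pending.append(command)
        applied = pending.popleft()  # oldest command is the one that takes effect now
        position += applied * dt
        errors.append(abs(target - position))
    return errors


no_delay = simulate_tracking_error(delay_steps=0)
with_delay = simulate_tracking_error(delay_steps=2)
print(f"final error without delay: {no_delay[-1]:.2e}")
print(f"largest late error with 2-step delay: {max(with_delay[-20:]):.2e}")
```

A policy tuned in a simulator that omits the delay would look stable there and oscillate on hardware, which is why delays are among the parameters worth calibrating or randomising.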

Simulation-first pipelines reduce reliance on physical trial-and-error, but deployment success depends on how well learned behaviours generalise under real-world variability. Reliable robotics systems, therefore, require tight alignment between training environments and operational conditions to ensure stable performance across perception, control, and system integration.

System-Level Constraints, Trade-offs, and Risks

Physical AI introduces constraints that are not present in purely digital systems.

- Latency and Determinism: Real-time systems require bounded response times. Variability in inference latency can lead to system instability.

- Energy and Compute Constraints: Edge devices must balance performance, power consumption, and thermal limits. This constrains model size and complexity, particularly for edge AI deployment on constrained hardware platforms.

- Safety and Verification: Physical systems operate in safety-critical environments. Verification must consider edge cases, failure modes, and human interactions. Simulation helps but does not eliminate risk, which motivates complementary techniques such as the formal verification approaches used in modern chip design.

- Data and Generalisation: Real-world environments are diverse. Models must generalise beyond training data. Overfitting to simulation or synthetic data introduces deployment risk, highlighting the need for robust embedded software validation in real-world systems.

- Integration Complexity: Physical AI systems require integration across sensors, compute platforms, and control systems. Failures often occur at interfaces rather than within models.
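The latency and determinism constraint above can be made concrete with a small report over measured inference times. The sample values and the 10 ms deadline are invented for illustration; a real system would log latencies on the target hardware.

```python
import math


def percentile(samples, pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_report(samples_ms, deadline_ms: float) -> dict:
    """Summarise latency samples against a hard per-cycle deadline."""
    misses = sum(1 for s in samples_ms if s > deadline_ms)
    return {
        "p50_ms": percentile(samples_ms, 50),
        "p99_ms": percentile(samples_ms, 99),
        "worst_ms": max(samples_ms),
        "miss_rate": misses / len(samples_ms),
        "meets_deadline": misses == 0,
    }


# Hypothetical measurements: mostly fast, with two slower outliers.
samples = [8.0] * 98 + [9.5, 14.0]
report = latency_report(samples, deadline_ms=10.0)
print(report)
```

In this example the median and 99th percentile both sit under the deadline while the worst case misses it, which is why bounded response time is judged on tail and worst-case latency and not on the average.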

Industry Impact and Adoption Patterns

Physical AI is already influencing multiple domains:

Industrial Automation

Digital twins enable optimisation of production lines before deployment. This reduces commissioning time and improves throughput.

Autonomous Systems

Vehicles and mobile robots rely on real-time perception and planning. Simulation enables validation across diverse scenarios.

Healthcare Robotics

Surgical systems require precision and reliability. Simulation supports validation under controlled conditions before clinical use.

Ecosystem adoption indicates a shift towards integrated platforms that combine training, simulation, and deployment workflows.

Conclusion

Physical AI represents a transition from isolated intelligence to embodied systems operating in dynamic environments. NVIDIA's Physical AI architecture provides a structured approach to managing this complexity through the separation of training, simulation, and deployment.

The key challenge is not model accuracy alone. It is system reliability under real-world constraints.

Simulation-first workflows, world foundation models, and edge deployment platforms collectively address parts of this challenge. However, integration, verification, and generalisation remain open engineering problems.

The transition to physical AI will depend on the ability to manage these system-level trade-offs with confidence.

To understand how AI is evolving from models to real-world systems, explore related analysis in our AI & ML overview series:

https://alpinumconsulting.com/resources/blog/ai-ml-overview/

References

[1] NVIDIA Physical AI and Robotics Ecosystem Overview (GTC)

https://www.nvidia.com/en-us/gtc/

[2] NVIDIA Omniverse and Digital Twin Simulation Documentation

https://developer.nvidia.com/nvidia-omniverse-platform

[3] NVIDIA Isaac Robotics Platform and Simulation Frameworks

https://developer.nvidia.com/isaac

[4] NVIDIA Cosmos World Foundation Models Overview

https://www.nvidia.com/en-us/ai/cosmos/

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).

- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting provides strategic board-level consultancy services, helping companies to grow.

- The Alpinum training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
