Counterfeiting is often treated as a packaging, labelling, or enforcement problem. In practice, it is also a systems problem. A product identity must survive manufacturing, distribution, inspection, hostile attempts at copying, and repeated field verification. If the identifier can be copied, detached, simulated, or reused, the authentication system inherits that weakness.

This is why quantum IDs for anti-counterfeiting deserve attention from engineers, system architects, and technical decision-makers. The core idea is not to add another visible mark to a product. It is to give each physical object or label a measurable identity that comes from microscopic physical behaviour and is therefore difficult to reproduce deliberately.

At the Verification & Semiconductors Futures Conference UK 2026, Prof. Rob Young, Chief Scientist and Founder of Quantum Base, will present “You can’t copy this: Quantum IDs and the end of counterfeiting” under the Startups and Breakthrough Technologies track. The session will examine how Quantum IDs, or Q-IDs, use physical, quantum-derived fingerprints that can be embedded into products and verified with standard hardware. It positions quantum technology not as an abstract research topic but as a practical route towards stronger product authentication and supply chain security.

The engineering question is clear: how can a physical identity system move from deep physics into a scalable authentication workflow without becoming fragile, expensive, or operationally difficult?

Image Source: lancaster.ac.uk

Why anti-counterfeiting needs physical identity

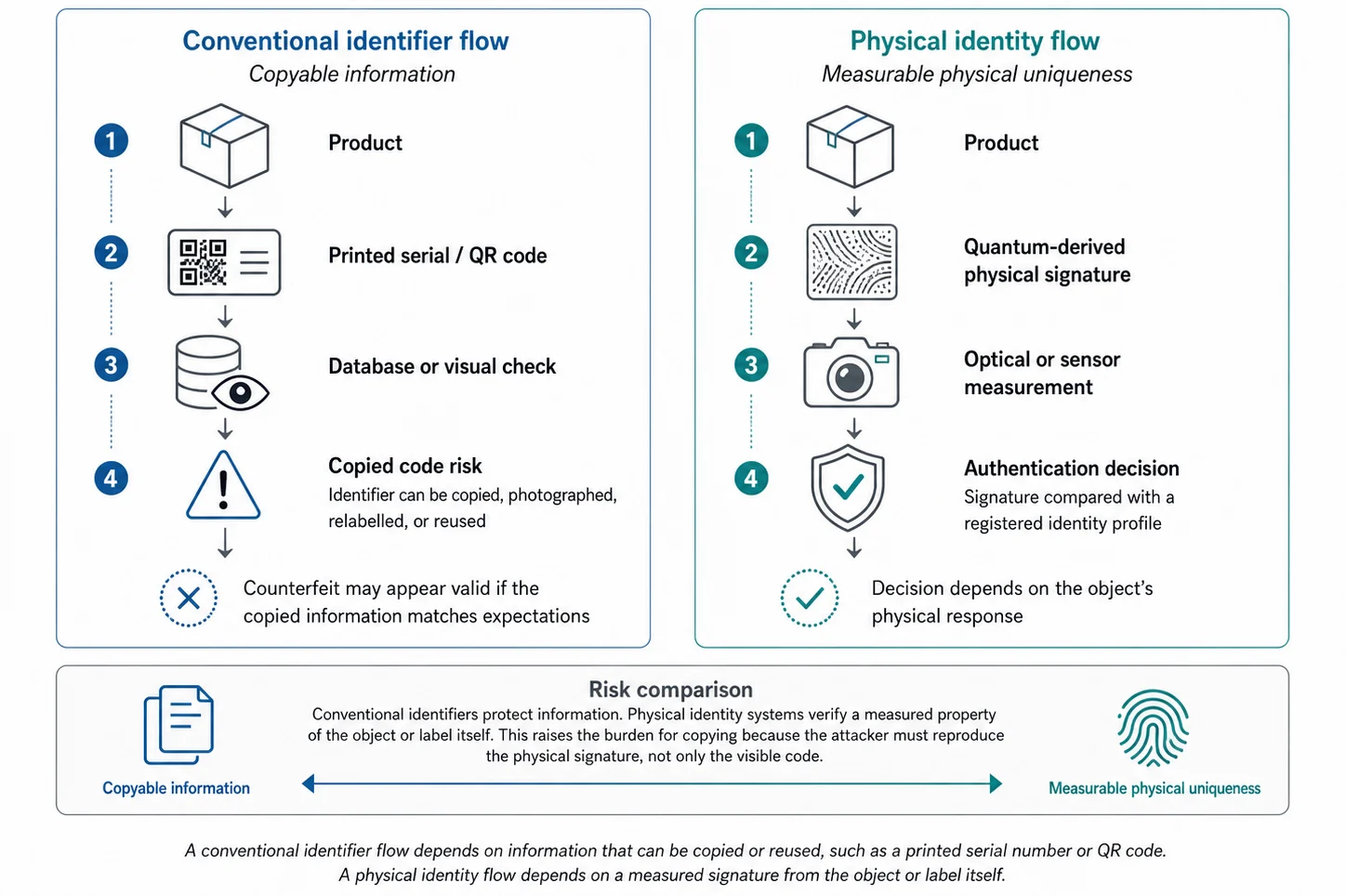

Traditional anti-counterfeiting methods often rely on visible marks, serial numbers, QR codes, holograms, tamper-evident packaging, or database-backed identifiers. These methods can still support traceability, but many depend on information that can be copied.

A serial number can be duplicated. A QR code can be photographed and reused. A visible label can be reproduced with enough effort. Even database checks can fail if a copied identifier appears plausible and inspection processes vary across markets.

A physical identity changes the model. Instead of asking only whether a printed code matches a record, the system asks whether the product or label itself carries a unique measurable signature. This moves authentication closer to the object and makes copying harder because the attacker must reproduce the physical response rather than just the visible information.

What makes quantum IDs different

Quantum IDs use physical uniqueness as the trust anchor. Rather than relying solely on a printed identifier, Q-IDs use quantum-derived physical fingerprints that can be embedded in products and verified with standard hardware.

For product authentication, this matters because identity becomes tied to measurable physical behaviour. In a conventional identifier system, the visible code carries most of the trust. In a quantum ID system, the physical signature becomes part of the trust model.

This does not mean any system becomes immune to all attacks. That would be the wrong engineering conclusion. The useful point is that quantum-derived physical identity raises the attacker’s burden. A counterfeiter must not only reproduce the appearance of a label or product. They must also reproduce a measurable microscopic signature that the authentication system can distinguish from a genuine one.

Figure 1: Physical identity versus copied information

Figure 1 shows why the identity source matters. Conventional identifiers can support traceability, but they remain vulnerable when the identifier itself can be copied. A physical identity system shifts authentication towards measurable object-level uniqueness. In a quantum ID or PUF-style model, the verifier checks a physical response rather than only checking a printed data string.
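The difference can be illustrated with a minimal sketch. It assumes, purely for illustration, that a physical fingerprint can be reduced to a fixed-length bit string and compared by fractional Hamming distance, as is common in PUF-style schemes. The function names, the 256-bit length, the 5% read noise, and the 0.25 threshold are all hypothetical and do not describe how Q-IDs are actually measured or matched.

```python
import random

def fractional_hamming(a, b):
    """Fraction of bit positions where two equal-length fingerprints differ."""
    if len(a) != len(b):
        raise ValueError("fingerprints must have the same length")
    return sum(x != y for x, y in zip(a, b)) / len(a)

def noisy_read(fingerprint, flip_prob, rng):
    """Simulate a field measurement: each bit flips with probability flip_prob."""
    return [bit ^ int(rng.random() < flip_prob) for bit in fingerprint]

def verify(serial, measured, registry, threshold=0.25):
    """Accept only if the measured response is close to the enrolled one.

    Knowing a valid serial number is not enough: the physical response
    measured from the object must also match the enrolled reference.
    """
    reference = registry.get(serial)
    if reference is None:
        return False
    return fractional_hamming(reference, measured) <= threshold

rng = random.Random(0)

# Enrolment: record the (hypothetical) 256-bit fingerprint of a genuine item.
enrolled = [rng.getrandbits(1) for _ in range(256)]
registry = {"SN-0001": enrolled}

# A genuine item read in the field with about 5% measurement noise.
genuine_read = noisy_read(enrolled, 0.05, rng)

# A counterfeit that copies the serial number but has its own physical response.
counterfeit_read = [rng.getrandbits(1) for _ in range(256)]

genuine_ok = verify("SN-0001", genuine_read, registry)
counterfeit_ok = verify("SN-0001", counterfeit_read, registry)
print(genuine_ok, counterfeit_ok)
```

In this toy model the copied serial number passes the database lookup in both cases, and only the physical response separates the genuine item from the counterfeit.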

From lab concept to scalable authentication

A new authentication method only becomes useful when it fits the operational environment. For product authentication, that environment includes manufacturing, packaging, logistics, customs inspection, retailer checks, consumer verification, and exception handling.

This is where quantum IDs become interesting from a systems perspective. The challenge is not only to create a unique identity. The challenge is to create an identity that can be generated, registered, deployed, measured, compared, and trusted across real supply-chain conditions.

A scalable authentication workflow must answer practical engineering questions. How is the identity created? How is it registered? What measurement conditions are acceptable? How does the system handle damaged labels, partial reads, lighting variation, device variation, and repeated scans? How are false accepts and false rejects measured?

These questions determine whether quantum IDs remain a technical demonstration or become usable infrastructure.

Constraints, trade-offs, and risks

Quantum IDs offer a strong technical direction for anti-counterfeiting, but deployment still involves engineering trade-offs.

- The first trade-off is between uniqueness and readability. A physical signature must contain enough variation to distinguish genuine objects, but it must also remain readable under real-world conditions. Excessive sensitivity can increase false rejects. Insufficient sensitivity can weaken discrimination.

- The second trade-off is between security and operational simplicity. A more complex measurement process may improve assurance, but it can reduce deployability. A practical authentication system must work across real inspection environments without adding unnecessary friction.

- The third trade-off concerns enrolment and governance. A physical identity is only useful if the original registration process is trustworthy. Attackers do not need to defeat the underlying physics if they can compromise enrolment, database integrity, software workflows, or operational controls.

- The fourth trade-off concerns lifecycle management. Products may be scanned many times, under different conditions, by different users, and across different markets. The system must define how to handle damaged labels, ambiguous reads, revoked identifiers, duplicate scans, and suspected counterfeit events.

This is why “unclonable” should not remove the need for verification. It should sharpen the verification requirement.
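The lifecycle cases above (unknown or revoked identifiers, ambiguous reads, unusual scan volumes) can be sketched as an explicit decision policy. This is an illustrative outline only: the outcome names, the two-threshold scheme, the scan limit, and the record fields are assumptions made for the example, not part of any Q-ID specification.

```python
from dataclasses import dataclass
from enum import Enum

class Outcome(Enum):
    GENUINE = "genuine"
    RETRY = "retry"        # ambiguous read: ask for another scan
    SUSPECT = "suspect"    # response too far from the enrolled reference
    REVOKED = "revoked"    # identifier was withdrawn after enrolment
    UNKNOWN = "unknown"    # identifier was never enrolled
    REVIEW = "review"      # matches, but scan volume is unusual

@dataclass
class Record:
    revoked: bool = False
    scan_count: int = 0

def decide(item_id, distance, records, accept=0.15, reject=0.35, max_scans=100):
    """Map one scan to an outcome. Thresholds are hypothetical placeholders."""
    record = records.get(item_id)
    if record is None:
        return Outcome.UNKNOWN
    if record.revoked:
        return Outcome.REVOKED
    if distance >= reject:
        return Outcome.SUSPECT
    if distance > accept:
        return Outcome.RETRY
    # Count only successful matches, then flag unusually high scan volumes.
    record.scan_count += 1
    if record.scan_count > max_scans:
        return Outcome.REVIEW
    return Outcome.GENUINE

records = {"A1": Record(), "B2": Record(revoked=True)}
print(decide("A1", 0.08, records))
print(decide("A1", 0.22, records))
print(decide("B2", 0.05, records))
```

Keeping a band between the accept and reject thresholds gives damaged labels and partial reads a defined path (rescan) instead of forcing every borderline measurement into accept or reject.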

Why verification thinking matters

Verification engineers routinely deal with conditions that authentication systems also face: corner cases, signal variation, thresholds, noise, coverage gaps, repeatability, and traceability from requirements to results. The same discipline applies to physical product identity.

A quantum ID system must control false accepts and false rejects. A “false accept” allows a counterfeit to appear genuine. A “false reject” challenges or blocks a genuine item. Both outcomes create risk, but they affect the system differently. False accepts weaken security. False rejects undermine operational trust.
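As a rough illustration of how the two error types trade off, the sketch below computes a false accept rate and a false reject rate from distance scores at a chosen threshold. The scores are made-up numbers chosen to show the effect, and the convention that a lower distance means a closer match is an assumption of the example.

```python
def error_rates(genuine_distances, impostor_distances, threshold):
    """Return (false_accept_rate, false_reject_rate) at a distance threshold.

    A scan is accepted when its distance to the enrolled reference is at or
    below the threshold.
    """
    false_accepts = sum(d <= threshold for d in impostor_distances)
    false_rejects = sum(d > threshold for d in genuine_distances)
    return (false_accepts / len(impostor_distances),
            false_rejects / len(genuine_distances))

# Illustrative, invented scores: genuine items rescanned, and counterfeit attempts.
genuine = [0.04, 0.06, 0.09, 0.12, 0.31]
impostor = [0.22, 0.44, 0.47, 0.51, 0.53]

for threshold in (0.15, 0.25, 0.35):
    far, frr = error_rates(genuine, impostor, threshold)
    print(f"threshold={threshold:.2f}  FAR={far:.2f}  FRR={frr:.2f}")
```

With these numbers, a strict threshold of 0.15 rejects one genuine item and accepts no counterfeits, while a loose threshold of 0.35 accepts every genuine item but also lets one counterfeit through. Choosing the operating point is a system decision, not a property of the physics alone.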

Verification thinking should cover the complete authentication path. Teams must define expected response envelopes, test environmental variation, evaluate spoofing attempts, assess measurement repeatability, and maintain traceability between the physical identity and its digital record.
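One simple way to picture an expected response envelope is to rescan the same genuine item many times and set a bound from the observed spread. The sketch below uses a mean-plus-k-sigma bound. The sample values, the choice of k = 3, and the function names are assumptions for illustration, and a real characterisation would need far more data across devices and environments.

```python
import statistics

def response_envelope(distances, k=3.0):
    """Upper bound on expected distance for repeated reads of a genuine item.

    Uses mean + k * sample standard deviation, which is only a crude model
    of measurement repeatability.
    """
    return statistics.mean(distances) + k * statistics.stdev(distances)

def within_envelope(distance, envelope):
    """True if a new read falls inside the characterised envelope."""
    return distance <= envelope

# Invented distances from six repeated scans of one enrolled item.
repeated_reads = [0.05, 0.06, 0.04, 0.07, 0.05, 0.06]
envelope = response_envelope(repeated_reads)

print(round(envelope, 3))
print(within_envelope(0.08, envelope))
print(within_envelope(0.20, envelope))
```

A read outside the envelope is not automatically a counterfeit. It is a signal that the measurement conditions, the scanner, or the item itself falls outside what was characterised, which is exactly the kind of case the test plan should cover.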

For semiconductor and systems teams, this is the most relevant point. A physical identity can strengthen the trust model, but the implementation still needs robust interfaces, repeatable measurement, secure data handling, and clear failure-mode analysis.

What this means for semiconductor and systems teams

Quantum IDs sit at the intersection of materials, optics, measurement, embedded systems, software, cloud infrastructure, and supply-chain operations. This makes the topic relevant beyond anti-counterfeiting alone.

- First, they reinforce the value of hardware-rooted identity. As products, components, medical devices, automotive systems, industrial equipment, and consumer goods move through longer supply chains, teams need stronger ways to prove provenance and authenticity.

- Second, they highlight the importance of measurement-aware security. Any system based on a physical response must handle noise, drift, calibration, environmental conditions, scanner variation, and software interpretation.

- Third, they extend verification beyond chip logic. Teams must verify the authentication workflow as a system. This includes physical identity generation, digital record management, scanner behaviour, failure modes, exception handling, and operational monitoring.

- Finally, quantum IDs show why technical advances need early scrutiny. A promising scientific principle can still fail if the interfaces, tolerances, economics, or operating assumptions do not scale.

About the Verification Futures session

Prof. Rob Young, Chief Scientist and Founder of Quantum Base, will present “You can’t copy this: Quantum IDs and the end of counterfeiting” at the Verification & Semiconductors Futures Conference UK 2026. The event is scheduled for Tuesday, 23 June 2026, at the University of Reading, UK, with the talk listed under the Startups and Breakthrough Technologies track.

The session connects quantum effects, physical identity, product authentication, and supply-chain trust in a way that should interest engineers responsible for system integrity, verification confidence, and technology deployment.

Conclusion

Quantum IDs represent a practical direction for anti-counterfeiting because they shift authentication from copyable information towards measurable physical identity. That shift matters for supply chains where conventional marks, labels, serial numbers, and codes can be duplicated or misused.

The engineering challenge is not only to create uniqueness. It is to preserve trust across the full authentication lifecycle: generation, registration, deployment, measurement, verification, exception handling, and audit.

For semiconductor and systems teams, the lesson extends beyond counterfeiting. As physical products become more connected, distributed, and security-sensitive, identity will increasingly depend on hardware-rooted trust. Quantum IDs show how deeply physics can be embedded in that trust model, provided the engineering system around it receives the same rigour as the technology itself.

Further engagement

View the full agenda and session timings on the Verification & Semiconductors Futures Conference UK 2026 page.

For related engineering context, see Alpinum’s events and training services.

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to more than 450 engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).

- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting provides strategic board-level consultancy services, helping companies to grow.

- Alpinum's training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinum-consulting).
