A systems-level view from semiconductor engineering

The question "Will this new technology take my job?" resurfaces whenever a general-purpose technology moves from research into routine industrial use. In semiconductor development, the issue is less about individual tasks and more about how uncertainty is resolved across a programme. As designs mature, risk concentrates rather than disperses, and decisions increasingly depend on integrated evidence drawn from multiple disciplines rather than isolated tool outputs.

Automation has long been used to manage this complexity. What has changed is how close automation now sits to engineering judgement, rather than to execution alone. AI-based tools are now applied to analysis, option exploration, prioritisation, and early-stage decision preparation. These activities influence how intent is interpreted, how trade-offs are framed, and which risks are surfaced for further investigation across the programme, which places them closer to judgement than earlier generations of execution-focused tooling.

In large semiconductor programmes, these effects become most visible as projects approach integration and sign-off. Evidence must be reconciled across hardware, software, verification, and system constraints, and residual risk must be explicitly accepted. Decisions at this stage carry programme-level consequences, emphasising disciplines in which evidence is integrated, assumptions are challenged, and outcomes are owned. Tools can accelerate the preparation of that evidence, but responsibility for accepting it remains human and programme-led.

5 tips to protect your job and opportunities to grow your career

In semiconductor engineering, job resilience and career progression are determined less by task execution and more by who owns responsibility, judgement, and system-level risk. As AI-assisted workflows expand, the nature of engineering work changes, but where accountability sits does not.

1. Anchor your role at the system level

Engineers who operate across hardware, software, verification, and system constraints remain central as automation increases. AI can accelerate analysis and option exploration, but it cannot reconcile cross-domain trade-offs or accept programme-level risk. System integration and sign-off responsibilities, therefore, continue to define long-term relevance.

2. Prioritise decision quality over task throughput

As AI makes it easier to generate large volumes of results, the real constraint moves away from execution speed and toward engineering judgement. Engineers who frame problems, challenge assumptions, and determine when evidence is sufficient retain influence as output volume increases.

3. Maintain ownership of verification intent and risk acceptance

AI-assisted verification can expand test generation and failure analysis, but it does not define what constitutes adequate evidence. Verification intent, residual risk, and sign-off thresholds remain human responsibilities, grounded in system context and programme constraints.

4. Build cross-domain context rather than tool dependence

AI increases coupling across the engineering flow by accelerating iteration between disciplines. Engineers who understand interactions between architecture, implementation, verification, and system constraints are less exposed than those operating within narrow tool boundaries.

5. Learn to supervise AI outputs, not simply consume them

As automation scales, weak oversight becomes more expensive than slow execution, particularly once errors start propagating across a programme. Professional relevance increasingly depends on knowing when to trust automated outputs, when to challenge them, and how to govern their use safely within complex programmes.

The analysis below draws on historical precedent, market behaviour, and programme-level experience to explain why these effects persist.

Historical perspective: what previous technology shifts actually did

Semiconductor engineering has repeatedly absorbed new layers of abstraction. Each transition reduced manual effort in specific tasks while increasing the scale and ambition of the systems being built. Computer-aided design replaced manual drafting. Hardware description languages shifted logic design from schematic entry to behavioural description. Simulation, coverage-driven verification, and formal methods changed how correctness was evaluated and demonstrated.

At each step, concern focused on the same question: if tools can perform more of the work, will fewer engineers be required? In practice, that outcome did not materialise. What changed was not the demand for engineers, but the composition of engineering effort.

The reason is structural rather than sentimental. When the cost of producing a unit of engineering output falls, organisations do not typically freeze scope. They expand it. Shorter design cycles enable additional variants. Improved verification efficiency supports higher integration density. Reduced turnaround time allows programmes to attempt more aggressive performance, power, and reliability targets within the same schedule envelope.

As a result, system complexity increases rather than disappears. Each abstraction layer shifts effort away from execution and toward integration, validation, and decision-making under uncertainty. Engineers spend less time performing repetitive tasks and more time managing interactions across subsystems, interpreting results, and resolving trade-offs with real programme consequences.

The semiconductor industry provides consistent evidence of this pattern. Advances in EDA did not eliminate engineering roles. They enabled larger, more interdisciplinary teams to deliver increasingly complex chips, under tighter constraints and with higher consequences of failure. Automation expanded what could be built and verified, not the extent to which responsibility could be removed.

AI-assisted engineering follows the same economic and organisational logic. By reducing the marginal cost of analysis, exploration, and iteration, it increases the volume of designs, platforms, and configurations that teams attempt to bring to market. The result is not less engineering work, but more systems to integrate, verify, and sign off.

Evidence that this wave feels different

The historical pattern in semiconductor automation is well established. As abstraction increases, manual effort at the task level declines, while system scope, integration complexity, and programme ambition expand. The current unease does not stem from automation itself, but from the proximity of AI-based tools to activities that influence engineering judgement rather than execution speed.

A distinguishing feature of recent AI adoption is the class of work being augmented. Many tools now support activities such as specification synthesis, option exploration, code scaffolding, and verification planning. These functions sit upstream of implementation and downstream of intent, shaping how problems are framed and how solution spaces are explored. As a result, AI is interacting with decision-adjacent workflows that have historically been governed directly by engineers rather than tooling.

The pace of adoption also differs from earlier technology shifts. Cloud delivery and software-only deployment allow new capabilities to be introduced without changes to physical infrastructure or long qualification cycles. Adoption timelines therefore compress relative to earlier transitions, which often unfolded over multiple process nodes or tool generations. Faster rollout makes the change more visible across the organisation and exposes more roles to it sooner.

Finally, many AI systems are not confined to a single stage of the engineering flow. The same underlying models are applied across requirements analysis, design exploration, documentation, test generation, and triage. This cross-cutting use creates the impression of broad occupational reach, particularly in roles where outputs are primarily digital and intermediate rather than physical.

Together, these factors explain why the current transition feels qualitatively different from earlier waves of automation. They describe a shift in where assistance is applied and how quickly it propagates, not a fundamental change in how accountability, system responsibility, or engineering ownership are assigned.

White-collar engineering work under AI assistance

In operational use, AI alters how engineering work is distributed rather than who carries responsibility for outcomes. The primary effect is a rebalancing of effort across tasks, with automation absorbing portions of analysis and generation while accountability remains anchored at the system level.

In verification, AI-assisted tools are increasingly used for activities such as test stimulus generation, coverage exploration, log interrogation, and failure classification. These capabilities reduce the manual effort required to process large volumes of data and explore state space more efficiently. They do not, however, establish verification intent, define risk acceptance thresholds, or determine sufficiency of sign-off. Those decisions remain dependent on system context, programme constraints, and engineering judgement exercised across disciplines.
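The separation described above, where tooling helps produce evidence while people define the thresholds and accept the residual risk, can be sketched as a small gate. This is a minimal illustration only: the class names, fields, threshold values, and status strings are all hypothetical, and real sign-off criteria are programme-specific and far richer than two numbers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SignOffCriteria:
    """Thresholds chosen by the engineering team, not by a tool (hypothetical fields)."""
    min_functional_coverage: float  # fraction in [0, 1]
    max_open_critical_failures: int


@dataclass(frozen=True)
class Evidence:
    """Results that AI-assisted tooling may help produce and summarise."""
    functional_coverage: float
    open_critical_failures: int


def meets_criteria(evidence: Evidence, criteria: SignOffCriteria) -> bool:
    """Mechanical check: does the evidence reach the human-defined thresholds?"""
    return (
        evidence.functional_coverage >= criteria.min_functional_coverage
        and evidence.open_critical_failures <= criteria.max_open_critical_failures
    )


def sign_off_status(
    evidence: Evidence,
    criteria: SignOffCriteria,
    approver: Optional[str] = None,
) -> str:
    """Evidence alone never yields sign-off; a named person must accept the risk."""
    if not meets_criteria(evidence, criteria):
        return "blocked: evidence below threshold"
    if not approver:
        return "pending: awaiting human risk acceptance"
    return f"signed off by {approver}"


# Illustrative values only (assumptions, not taken from any real programme).
criteria = SignOffCriteria(min_functional_coverage=0.98, max_open_critical_failures=0)
evidence = Evidence(functional_coverage=0.99, open_critical_failures=0)

print(sign_off_status(evidence, criteria))
print(sign_off_status(evidence, criteria, approver="verification lead"))
```

The design choice worth noting is that passing the threshold check only moves the status to "pending". Sign-off requires an explicit, named approver, which mirrors the point that sufficiency is a human decision.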

A similar pattern is visible in software and firmware development. AI can accelerate boilerplate creation, refactoring, and exploratory implementation. The result is faster iteration, but not resolution of architectural trade-offs, performance boundaries, safety constraints, or long-term maintainability considerations. These aspects remain coupled to system knowledge, lifecycle awareness, and experience accumulated over multiple programmes.

At the programme level, the net effect is increased leverage rather than displacement. Individual engineers can evaluate a broader set of options, and teams can explore larger design spaces within fixed schedules and cost envelopes. This shift enables higher functional ambition and greater integration density without reducing the need for oversight at decision points.

Recent AI-driven design initiatives, including emerging frontier lab efforts, reinforce this pattern [1]. These platforms apply machine-learning techniques across architecture exploration, physical design optimisation, and iteration planning, with the explicit aim of compressing multi-year chip development cycles. These efforts explicitly preserve human ownership of architectural decisions while compressing the feedback cycle between design intent, implementation trade-offs, and downstream verification consequences. Even in these environments, responsibility for architectural coherence, verification strategy, and system-level risk remains firmly with human engineering teams.

Cheaper development leads to more chips, not fewer engineers

A persistent counterweight to large-scale displacement in semiconductor engineering is demand elasticity. When development costs fall, the historical outcome has not been fewer products being built. Instead, organisations broaden the scope of what they choose to design, integrate, and deploy.

As non-recurring engineering costs decline, programmes tend to pursue more variants, more market-specific configurations, and shorter product lifecycles. This behaviour is already visible across domain-specific accelerators, automotive SoCs, and edge devices. In these classes of systems, differentiation increasingly comes from configuration choices, integration strategy, and software coupling, rather than from a single monolithic design. Lower barriers to iteration do not simplify programmes; they expand their scope.

AI-assisted development further pushes this dynamic by reducing the effort required for each design iteration. Exploration cycles shorten, and alternative architectures can be evaluated with less upfront cost. Teams can therefore examine a wider set of design options within a fixed schedule. The practical effect is not a reduction in engineering work, but an increase in the number of chips, platforms, and system configurations that reach implementation.
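The elasticity argument can be made concrete with a toy model. The sketch below assumes a constant-elasticity demand curve for new designs, which is a textbook simplification and not something the cited sources measure. The function name and the elasticity values are hypothetical and chosen only to show the three possible regimes.

```python
def relative_total_effort(cost_ratio: float, elasticity: float) -> float:
    """Total engineering effort after a cost change, relative to before.

    cost_ratio: new cost per design / old cost per design (0.5 means cost halves).
    elasticity: assumed constant price elasticity of demand for new designs.

    Under this assumption the number of designs started scales as
    cost_ratio ** -elasticity, so total effort (designs x cost per design)
    scales as cost_ratio ** (1 - elasticity).
    """
    if cost_ratio <= 0:
        raise ValueError("cost_ratio must be positive")
    return cost_ratio ** (1.0 - elasticity)


# Hypothetical scenario: cost per design halves under three elasticity assumptions.
for elasticity in (0.5, 1.0, 1.5):
    print(elasticity, round(relative_total_effort(0.5, elasticity), 2))
```

With cost per design halved, total effort falls to about 0.71 of its baseline at an elasticity of 0.5, is unchanged at 1.0, and rises to about 1.41 at 1.5. The claim that cheaper development leads to more engineering work therefore corresponds to demand for new designs being elastic (greater than one) in this simplified model.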

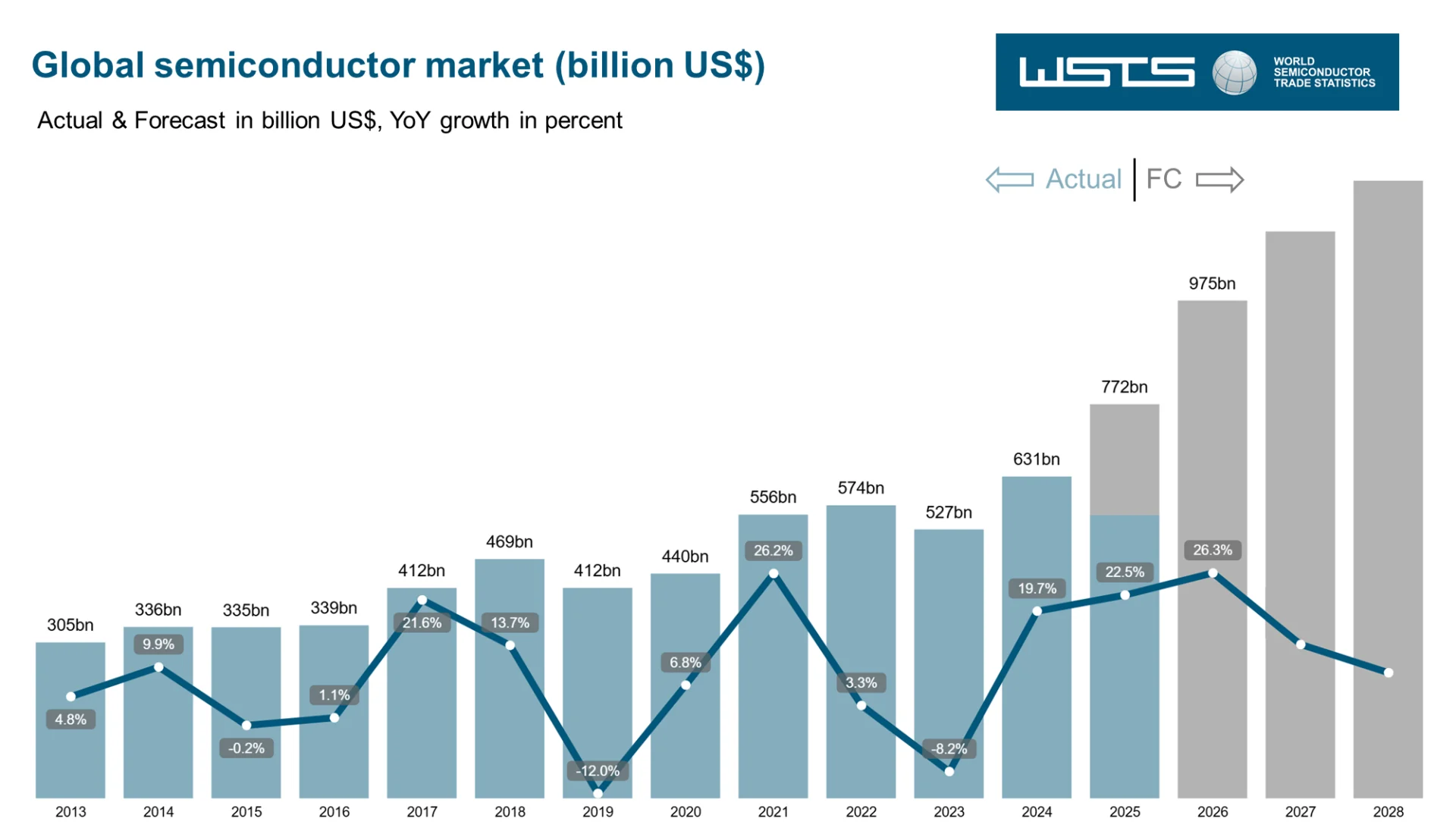

Market data is consistent with this expansion. WSTS Autumn 2025 forecasts attribute current growth primarily to logic and memory devices, aligned with AI-driven workloads and continued investment in computing and data-centre infrastructure [2]. WSTS projects worldwide semiconductor revenue to reach approximately USD 772 billion in 2025 and grow beyond USD 975 billion in 2026, placing the industry on a clear trajectory toward the one-trillion-dollar mark [2].

Figure 1: Global semiconductor market growth trajectory toward USD 1 trillion. Source: wsts.org
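As a quick check on the WSTS figures quoted above, the implied year-on-year growth and the remaining distance to USD 1 trillion can be worked out directly. The sketch treats the rounded values (772 and 975, in USD billion) as exact, so the results are approximate. The helper name is illustrative.

```python
def growth_rate(start: float, end: float) -> float:
    """Fractional growth from start to end."""
    return (end - start) / start


# Rounded WSTS forecast values quoted in the text, in USD billion.
revenue_2025 = 772.0
revenue_2026 = 975.0

implied_growth = growth_rate(revenue_2025, revenue_2026)
remaining = growth_rate(revenue_2026, 1000.0)

print(f"Implied 2025 to 2026 growth: {implied_growth:.1%}")
print(f"Further growth needed to reach USD 1 trillion: {remaining:.1%}")
```

On these rounded inputs the forecast implies growth of roughly 26% between 2025 and 2026, after which a further increase of under 3% would reach the one-trillion-dollar mark.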

This scale expansion does not simplify engineering effort. It multiplies the number of integration boundaries, verification conditions, and system-level trade-offs that must be resolved. As a result, demand increases for engineers capable of operating across hardware, software, verification, and system requirements, particularly where correctness, performance, and risk acceptance converge under programme-level constraints.

Market signals and realistic predictions

Market forecasts consistently predict productivity gains rather than wholesale job elimination in engineering-intensive sectors. Analyst outlooks emphasise role reshaping rather than role removal.

Deloitte’s semiconductor industry outlook identifies AI as a structural growth driver rather than a labour-replacing force. It links rising automation with increased design activity, higher system complexity, and persistent talent constraints, rather than a reduction in engineering demand [3].

This view is reinforced by industry-wide shipment and revenue forecasts published by the World Semiconductor Trade Statistics organisation, which indicate sustained long-term market growth rather than contraction [2].

Regulated industries further limit the scope for displacement. In automotive, aerospace, medical, and infrastructure systems, certification requirements, traceability obligations, and legal accountability constrain the extent to which automation can replace human judgement. AI may support evidence generation and analysis, but responsibility for decisions and sign-off cannot be delegated to a model.

Taken together, these regulatory and accountability constraints establish a practical floor under demand for qualified professionals who can interpret results, challenge assumptions, and validate AI-assisted outputs within a system and programme context.

Risk, accountability, and why humans remain central

AI systems do not own outcomes. They do not carry legal, ethical, or organisational responsibility. In system engineering, someone must decide when evidence is sufficient, when uncertainty is acceptable, and when residual risk can be tolerated.

As AI increases output volume and speed, the importance of judgement increases rather than diminishes. Poorly governed automation accelerates error propagation across design, verification, and operational boundaries. The dominant risk shifts from execution effort to oversight failure.

From a programme perspective, AI changes how work is performed, but not who is accountable. Responsibility remains with engineers and programme leaders who set intent, govern tool usage, challenge outputs, and accept the consequences of decisions made under uncertainty and incomplete information.

Practical ways to protect and future-proof technical roles

Resilience in technical roles does not come from resisting new tools. It comes from operating at points in the engineering process where intent is defined, trade-offs are resolved, and risk is accepted.

Work centred on system understanding, cross-domain integration, and decision-making under uncertainty remains difficult to automate because it depends on context rather than execution speed. By contrast, tasks that are narrow, repetitive, and weakly coupled to system-level consequences are more exposed as automation capability increases.

Practical steps include:

- Developing system-level literacy beyond a single tool or language

- Understanding verification intent, not just execution

- Engaging with architecture, trade-off analysis, and risk assessment

- Learning how to supervise and validate AI-assisted workflows

AI literacy itself becomes part of professional competence. Knowing when to trust a tool, when to challenge it, and how to integrate it safely into workflows is increasingly differentiating.

Conclusion: the question behind the question

AI will alter how engineering work is performed. That outcome is already visible across design, verification, and integration workflows. The available evidence, however, does not indicate a near-term collapse of white-collar engineering roles within the semiconductor industry, particularly in domains characterised by high complexity, tight coupling, and explicit accountability.

The primary effect of AI is to compress effort at the task level while expanding ambition at the system level. Historically, this shift has led organisations to pursue larger designs, denser integration, and more aggressive schedules rather than to reduce engineering involvement. As productivity increases, the volume of decisions that require context, judgement, and risk ownership also increases.

Taken together, these dynamics reframe the underlying concern. The central question is not whether AI removes engineering roles, but which forms of expertise remain constrained as scale and complexity grow. Skills grounded in system understanding, cross-domain integration, verification intent, and decision-making under uncertainty continue to define where engineering effort concentrates, even as tooling evolves.

Continue Exploring

If you would like to explore more work in this area, see the related articles in the AI and ML Overview section on the Alpinum website:

https://alpinumconsulting.com/resources/blog/ai-ml-overview/

For discussion, collaboration, or technical engagement, contact Alpinum Consulting here:

https://alpinumconsulting.com/contact-us/

References

[1] Semiconductor Digest. Ricursive Intelligence launches Frontier AI Lab to accelerate AI-driven chip design. https://www.semiconductor-digest.com/ricursive-intelligence-launches-frontier-ai-lab/

[2] World Semiconductor Trade Statistics (WSTS). Global Semiconductor Market Approaches $1T in 2026. WSTS Press Release, Autumn 2025. https://www.wsts.org/76/Global-Semiconductor-Market-Approaches-1T-in-2026

[3] Deloitte. 2024 Semiconductor Industry Outlook: Trends and predictions for a cyclical industry. https://www.deloitte.com/global/en/Industries/tmt/perspectives/semiconductor-industry-outlook.html

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on Silicon and FPGA) going into commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to more than 50 clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%).

- Alpinum Services provides RTL to GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting also provides strategic board-level consultancy services, helping companies to grow.

- Alpinum training department provides self-paced, fully online training in SystemVerilog, UVM Introduction and Advanced, Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinumconsulting).
