From Experimentation to Enterprise Backbone

Five key learning points for enterprise AI leaders in 2026

| Key learning point | Link to detailed explanation | External reference |
|---|---|---|
| AI success in 2026 is determined by operating model and leadership readiness, not model capability. | Section “The AI Value Paradox: Rising Spend, Stalled Outcomes” | McKinsey – The State of AI 2025 |
| Most enterprise AI programmes fail in “pilot purgatory” due to governance and accountability gaps. | Section “Pilot Purgatory: The Dominant Enterprise Failure Mode” | Gartner CIO Report (via AWS, 2025) |
| AI has become a leadership discipline, requiring explicit ownership of decisions, risk, and outcomes. | Section “Leadership Readiness Is the Real Constraint” | BCG – The Widening AI Value Gap |
| Scalable AI requires coordinated change across operating models, governance, skills, and infrastructure. | Section “The Seven Dimensions of AI Execution Maturity” | IBM IBV – The AI Multiplier Effect |
| Responsible AI and governance are execution enablers, not compliance overheads, at enterprise scale. | Section “Governance Without Paralysis” | NIST AI Risk Management Framework |

2026 Is the Inflection Point for Enterprise AI

By 2026, artificial intelligence will no longer be judged by experimentation, innovation theatre, or pilot velocity. It will be judged by whether it delivers reliable, repeatable business outcomes at scale. Across industries, AI adoption has accelerated faster than organisational readiness. While model capability, tooling, and infrastructure continue to advance rapidly, execution maturity has not kept pace. The result is a widening gap between AI investment and realised value, particularly at enterprise scale.

McKinsey’s State of AI 2025 survey shows that although AI adoption continues to grow across functions, only a minority of organisations report a material impact on earnings or a sustained competitive advantage. Technology constraints do not drive this gap; it stems from weaknesses in operating models, leadership alignment, governance, and outcome measurement.

Stanford’s AI Index Report 2025 reaches a similar conclusion, noting that AI has entered a new phase in which evaluation, accountability, and execution discipline matter more than experimentation or evangelism. Figure 1 illustrates this transition.

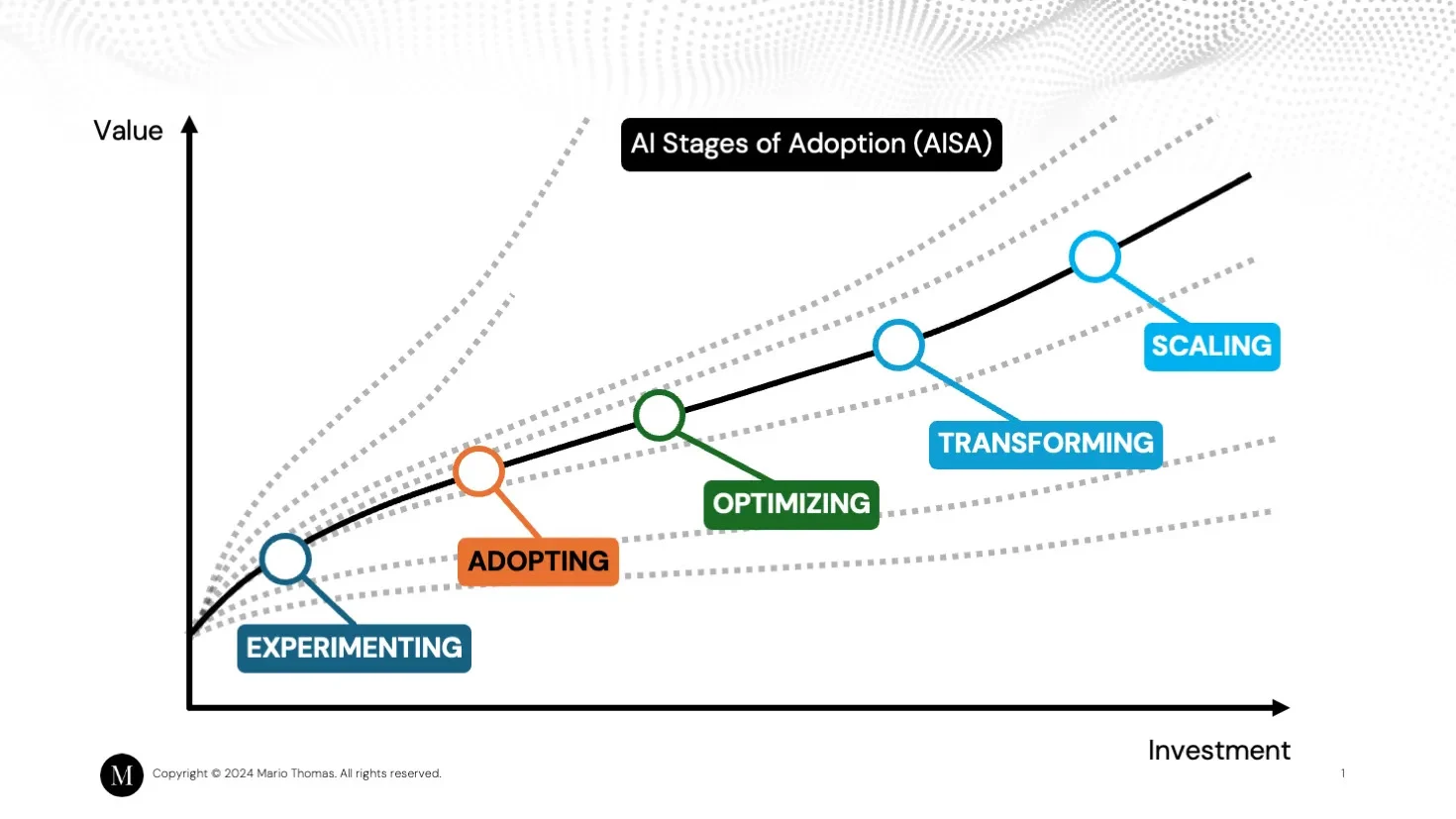

Figure 1: Enterprise AI adoption curve showing the transition from experimentation to scalable execution.

Source: Mario Thomas

As shown in Figure 1, enterprise AI programmes move through a clear progression from experimentation and early adoption to optimisation, transformation, and, finally, scaling. The inflection occurs when organisations shift from isolated pilots to structured execution, where AI is embedded consistently into core workflows and measured against business outcomes rather than technical success alone. Most stalled AI programmes fail at this transition point, not because the models underperform, but because the organisation is unprepared to industrialise them.

By 2026, this transition is no longer optional. AI becomes structural, embedded into decision-making, operations, and value creation. Organisations that fail to cross the execution threshold will not simply move more slowly; they will increasingly struggle to justify continued AI investment.

The AI Value Paradox: Rising Spend, Stalled Outcomes

Enterprise spending on AI continues to rise. According to McKinsey, 92% of companies increased AI investment in 2025, yet only a small proportion report measurable profit-and-loss impact [McKinsey, 2025].

This paradox is now visible across industries. Analysis cited by Amazon Web Services (AWS) shows that 42% of companies abandoned most AI initiatives in the first half of 2025, up from 17% the previous year [S&P Global via AWS, 2025]. Gartner further predicts that over 40% of agentic AI projects will be cancelled by 2027 [Gartner, cited by AWS, 2025].

The issue is not model capability.

AI programmes fail because organisations do not change how decisions are made, how accountability is assigned, or how value is measured.

The bottleneck is not technology. It is the operating model.

Pilot Purgatory: The Dominant Enterprise Failure Mode

The most common AI failure pattern in large organisations is not outright collapse, but stagnation. AI pilots succeed technically. Proofs of concept demonstrate feasibility. Tools are deployed. Yet programmes fail to scale.

Microsoft’s 2025 Work Trend Index shows that while 24% of organisations have deployed AI company-wide, only 12% remain explicitly in pilot mode. Despite this apparent progress, Gartner reports that 70% of generative AI initiatives do not move beyond pilots due to governance and data readiness failures [Microsoft, 2025; Gartner CIO Report].

Common symptoms include:

Pilot purgatory persists because enterprises optimise locally while failing systemically.

Leadership Readiness Is the Real Constraint

AI Is Now a Leadership Discipline

In 2026, AI will expose weak leadership rather than weak technology. Boston Consulting Group (BCG) describes a growing “AI value gap” between organisations that redesign for AI and those that merely deploy tools [BCG, 2025]. The difference is not data science capability, but executive ownership.

AI now directly affects:

As a result, AI cannot be delegated to innovation teams or technical centres of excellence. Microsoft’s CIO playbook emphasises that AI transformation requires CIOs to operate as business leaders, orchestrating cross-functional alignment rather than managing infrastructure alone [Microsoft, 2025].

Decision Rights Must Be Explicit

Successful AI programmes define:

Stanford researchers note that lack of transparency and unclear accountability are now primary barriers to responsible AI deployment [Stanford HAI, 2025].

In 2026, ambiguity becomes risk.

From Tools to Operating Models

If 2025 was about models that could talk, 2026 is about systems that can act.

AI systems are increasingly:

This shift fundamentally changes operating models. According to McKinsey, organisations that achieve sustained AI advantage rethink business models, cost structures, and decision flows, rather than layering AI onto existing processes [McKinsey, 2025].

Incremental adoption fails because legacy workflows cannot absorb autonomous systems.

The Seven Dimensions of AI Execution Maturity

Evidence from AWS, IBM, Accenture, and BCG points to the same conclusion: AI success requires synchronised transformation across multiple dimensions.

Drawing from these sources, seven execution dimensions consistently determine outcomes:

- AI vision tied to business value, not experimentation

- Process redesign for human-AI collaboration

- Leadership-led change management

- Data and infrastructure readiness

- Skills and organisational capability

- Governance, risk, and trust mechanisms

- Industrialisation and scale discipline

IBM’s 2025 CDO study shows that organisations integrating AI with decision-ready data see significantly higher growth multipliers than those treating AI as a standalone capability [IBM IBV, 2025].

Weakness in any single dimension constrains the entire system.

Governance Without Paralysis

AI governance is no longer optional. But by 2026, poorly designed governance is one of the primary reasons AI programmes fail to scale. Many organisations respond to AI risk by centralising control, introducing heavyweight approval processes, and slowing deployment. Others decentralise entirely, allowing teams to experiment freely but leaving material gaps in accountability, compliance, and trust. Both approaches fail at scale.

Evidence from AWS case studies shows that organisations achieving sustained AI value adopt balanced governance models. These models combine centralised guardrails for risk, compliance, and trust with federated execution that allows business units to deploy AI rapidly within clear boundaries. This approach consistently enables faster delivery than either rigid centralisation or uncontrolled decentralisation [AWS, 2025].

The NIST AI Risk Management Framework reinforces this principle. Rather than treating governance as a control function, NIST frames trustworthiness, transparency, and accountability as enablers of adoption. Clear decision rights, explainability expectations, and escalation paths reduce uncertainty, accelerate approvals, and increase executive confidence in deploying AI into mission-critical workflows [NIST AI RMF, 2023].

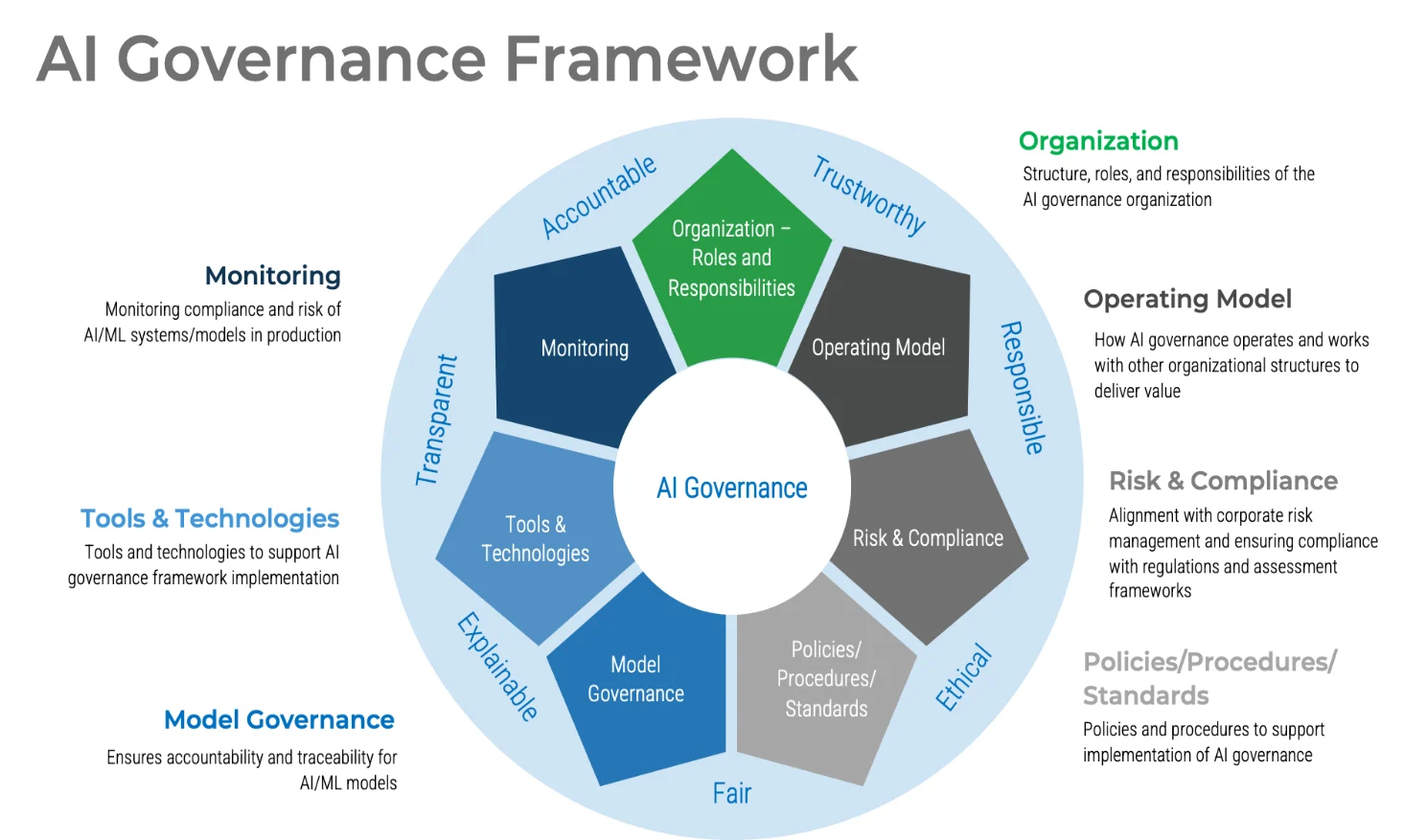

Figure 2 illustrates how effective AI governance operates as an integrated system, rather than a compliance overlay.

Figure 2: AI Governance Framework for Enterprise-Scale Deployment. Source: techcrunch.com

Figure 2 shows a governance model in which organisational roles, operating models, risk and compliance, policies and standards, model governance, tools and technologies, and monitoring mechanisms are integrated into a single execution framework. Rather than slowing delivery, this structure removes ambiguity by making responsibilities explicit and decision flows visible across the AI lifecycle.

What effective governance enables in practice

Well-designed AI governance does three things simultaneously:

By 2026, the organisations that scale AI successfully are not those with the most permissive governance, nor the strictest. They are the ones that treat governance as execution infrastructure, a system that makes responsible AI deployment faster, safer, and repeatable.

Measuring What Matters: Redefining AI ROI

Traditional cost-based ROI models break down under the economics of AI. AWS and BCG both highlight that outcome-anchored metrics outperform cost tracking in predicting AI success [AWS, 2025; BCG, 2025].

Effective organisations track:

Microsoft Copilot Analytics similarly frames success in terms of readiness, adoption, and impact, rather than tool utilisation alone [Microsoft, 2025].

Infrastructure Reality: AI Readiness Is Not Cloud Readiness

AI workloads stress infrastructure differently from traditional cloud systems. Google Cloud’s 2025 State of AI Infrastructure report shows that while 98% of organisations are exploring generative AI, only 39% have deployed it in production, citing data quality, security, and cost as primary blockers [Google Cloud, 2025].

Key constraints include:

Infrastructure misalignment is now a top-tier execution risk.

Responsible AI as a Scale Enabler

Responsible AI is increasingly recognised as a prerequisite for scale, not a compliance afterthought. The World Economic Forum reports that fewer than 1% of organisations have fully operationalised responsible AI, despite widespread adoption [WEF, 2025].

Stanford and NIST both emphasise that trust determines adoption velocity. Systems that cannot be explained, governed, or audited will not scale in regulated or mission-critical environments [Stanford HAI, 2025; NIST, 2023].

What the Winners Are Doing Differently

Across McKinsey, BCG, IBM, AWS, and Accenture, high-performing organisations share common traits:

BCG reports that organisations succeeding with AI achieve 45% greater cost savings and 60% higher revenue growth compared to laggards [BCG, cited by AWS, 2025].

The 2026 Leadership Test

AI will not wait for organisational comfort. By 2026, technology will no longer be the limiting factor. Leadership readiness, operating-model clarity, and accountability will determine outcomes. AI does not fail because of technology; it fails because of leadership. For organisations prepared to make AI a core business discipline, 2026 represents a competitive inflection point.

Every wave of technology creates this moment. Tools mature. Leadership becomes the constraint. The shift now is from asking what AI can do to asking whether leaders can govern, sequence, and scale it with intent. For organisations that are not prepared, AI will expose weaknesses that can no longer be deferred.

Continue Exploring

If you would like to explore more work in this area, see the related articles in the AI and ML Overview section on the Alpinum website:

👉 https://alpinumconsulting.com/resources/blog/ai-ml-overview/

For organisations seeking decision-grade clarity on AI execution, governance, and scale:

👉 https://alpinumconsulting.com/contact-us/

Written by: Mike Bartley

Mike started in software testing in 1988 after completing a PhD in Math, moving to semiconductor Design Verification (DV) in 1994, verifying designs (on silicon and FPGA) for commercial and safety-related sectors such as mobile phones, automotive, comms, cloud/data servers, and Artificial Intelligence. Mike built and managed state-of-the-art DV teams inside several companies, specialising in CPU verification.

Mike founded and grew a DV services company to 450+ engineers globally, successfully delivering services and solutions to 50+ clients.

Mike started Alpinum in April 2025 to deliver a range of state-of-the-art industry solutions:

- Alpinum AI provides tools and automations using Artificial Intelligence to help companies reduce development costs (by up to 90%!).

- Alpinum Services provides RTL-to-GDS VLSI services from nearshore and offshore centres in Vietnam, India, Egypt, Eastern Europe, Mexico and Costa Rica.

- Alpinum Consulting provides strategic board-level consultancy services, helping companies to grow.

- Alpinum's training department provides self-paced, fully online training in SystemVerilog, UVM (introductory and advanced), Formal Verification, DV methodologies for SV, UVM, VHDL and OSVVM, and CPU/RISC-V.

- Alpinum Events organises a number of free-to-attend industry events.

You can contact Mike (mike@alpinumconsulting.com or +44 7796 307958) or book a meeting with Mike using Calendly (https://calendly.com/mike-alpinumconsulting).
